Screen Scraping Guide For Businesses

Grasping the Fundamentals of Screen Scraping

Screen scraping is a powerful technique that allows you to extract data from websites by mimicking human browsing behavior. Essentially, it involves capturing the information displayed on a web page, which can then be transformed into a structured format suitable for analysis or integration into your business applications. Unlike traditional data extraction methods that often rely on APIs or databases, screen scraping directly interacts with the web interface, making it particularly useful when data is not readily available through formal channels.

In today’s fast-paced business environment, the ability to gather insights from diverse online sources is invaluable. For instance, e-commerce platforms frequently utilize screen scraping to monitor competitor pricing, ensuring they remain competitive in the market. Similarly, financial analysts might scrape data from news sites or social media to gauge market sentiment.

The relevance of screen scraping extends across various sectors. In the travel industry, companies can aggregate flight and hotel information from multiple sources to provide users with comprehensive options. In real estate, agents can scrape property listings to keep their databases current. These examples demonstrate how screen scraping can empower your organization by providing timely data that informs decision-making and strategy.

Assessing Your Business Needs for Effective Screen Scraping

When it comes to harnessing the power of data, understanding your business needs is crucial. Screen scraping can be an invaluable tool, but it’s essential to identify specific scenarios where it can add real value to your operations. So, how do you go about this assessment?

Start by evaluating your data requirements. What types of data are you missing that could enhance your decision-making? Consider the areas of your business that could benefit from timely and accurate data. For instance, if you’re in retail, tracking competitor pricing can provide insights that help you adjust your strategies swiftly.

Next, define your screen scraping objectives. What are you hoping to achieve? Are you looking to gather market intelligence, monitor brand reputation, or maybe even automate routine tasks? Clear objectives will not only guide your scraping efforts but also set the stage for measuring success later on.

It’s also important to outline expected outcomes. What does success look like for you? Perhaps it’s a 20% increase in sales due to better pricing strategies, or maybe it’s streamlining your reporting process, saving hours of manual work. Establishing these benchmarks will help you gauge the effectiveness of your screen scraping initiatives.

Finally, don’t forget to involve your team in this process. Engaging various stakeholders can uncover additional insights into data needs and potential use cases. By collaboratively defining your objectives and expected outcomes, you can ensure that your screen scraping efforts align with your overall data strategy and drive meaningful results for your business.

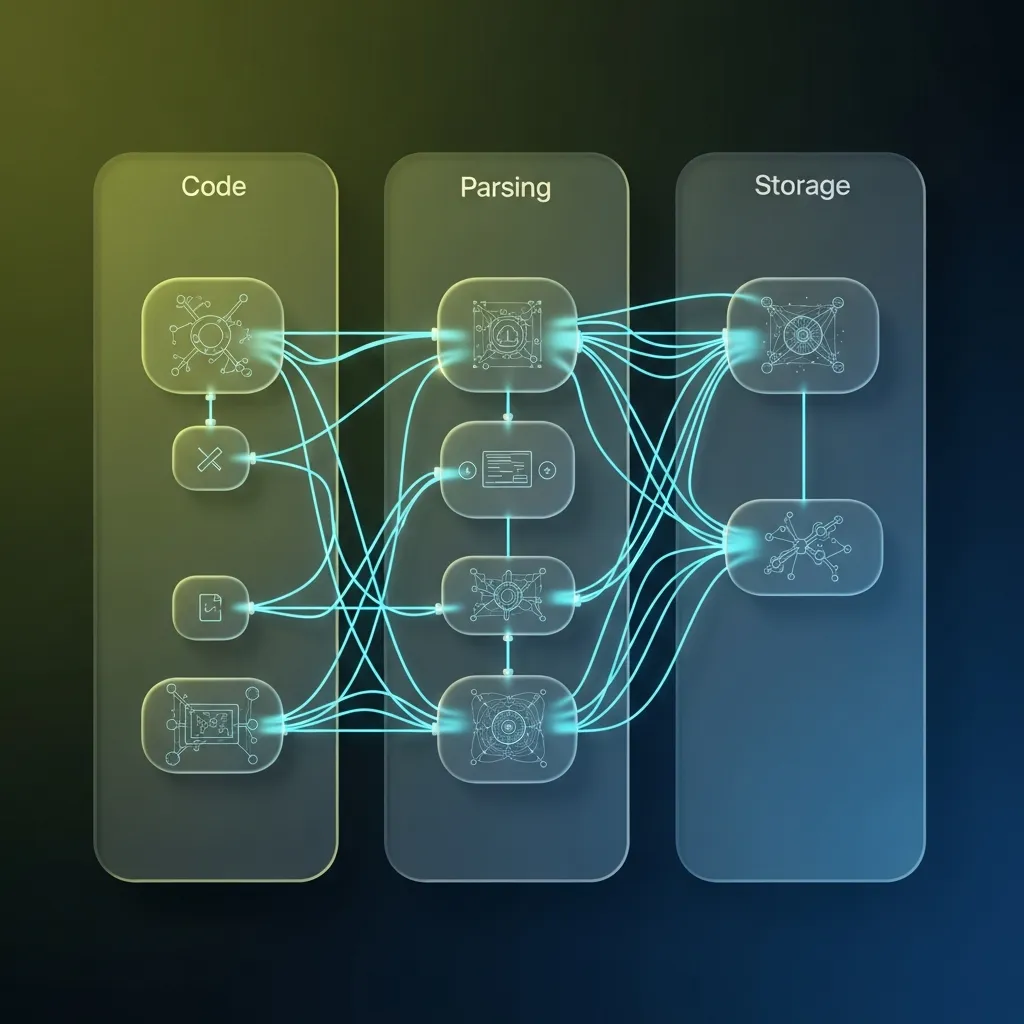

Unraveling the Technical Aspects of Screen Scraping

Understanding the underlying technology stack is crucial to scraping well. It’s not just about pulling data from a website; it’s about doing it efficiently and reliably. Let’s break this down into the core components you should consider.

First, the choice of programming language plays a significant role. Python is a popular choice for screen scraping because of its simplicity and its large ecosystem of libraries. Beautiful Soup parses HTML and XML documents so you can navigate them and extract the data you need, while Scrapy is a full crawling framework that also handles requests, scheduling, and data pipelines. If you lean towards JavaScript, Node.js offers tools like Puppeteer, which drives a real browser such as headless Chrome, enabling you to scrape dynamic content rendered by JavaScript.
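To make the parsing step concrete, here is a minimal sketch. It uses Python’s built-in html.parser module as a stand-in for Beautiful Soup so that it runs without extra installs. The sample markup, the class names, and the names ProductParser and parse_products are hypothetical; a real page will need its own rules.

```python
from html.parser import HTMLParser

# Hypothetical product-listing markup; a real page would be fetched over HTTP.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Widget</span><span class="price">$19.99</span></li>
  <li class="product"><span class="name">Gadget</span><span class="price">$4.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects the text of <span class="name"> and <span class="price"> pairs."""

    def __init__(self):
        super().__init__()
        self.products = []   # finished records
        self._field = None   # field currently being read, if any
        self._current = {}   # record under construction

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "span" and css_class in ("name", "price"):
            self._field = css_class

    def handle_data(self, data):
        if self._field:
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None
        elif tag == "li" and self._current:
            # Closing </li> marks the end of one product record.
            self.products.append(self._current)
            self._current = {}


def parse_products(html):
    parser = ProductParser()
    parser.feed(html)
    return parser.products
```

Calling parse_products(SAMPLE_HTML) returns one dictionary per product, which is the kind of structured output you would then validate and store. With Beautiful Soup the same extraction would typically take fewer lines, at the cost of an extra dependency.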

Next, let’s talk about the libraries and tools that can elevate your scraping efforts. Besides Beautiful Soup and Scrapy, you might encounter Requests for handling HTTP requests, and lxml for fast parsing of XML and HTML. Each of these tools has its strengths, so selecting the right combination is essential for your specific needs.

Now, let’s not overlook the infrastructure that supports scraping operations. Depending on the scale of your scraping tasks, you may need robust servers to handle multiple requests simultaneously. Utilizing cloud services can offer scalability, allowing you to adapt to fluctuating data demands without investing heavily in physical hardware. Additionally, implementing a solid database solution for storing your scraped data is vital, whether it’s SQL for structured data or NoSQL for more flexible formats.
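As a rough illustration of the storage side, the sketch below writes scraped prices to SQLite through Python’s built-in sqlite3 module. The table layout and the function names save_prices and latest_price are assumptions made for this example; a production setup might use MySQL, PostgreSQL, or a NoSQL store instead.

```python
import sqlite3


def save_prices(conn, rows):
    """Insert (product, price, scraped_at) rows, replacing same-key duplicates."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS prices (
               product TEXT NOT NULL,
               price REAL NOT NULL,
               scraped_at TEXT NOT NULL,
               PRIMARY KEY (product, scraped_at)
           )"""
    )
    conn.executemany(
        "INSERT OR REPLACE INTO prices (product, price, scraped_at) VALUES (?, ?, ?)",
        rows,
    )
    conn.commit()


def latest_price(conn, product):
    """Return the most recently scraped price for a product, or None."""
    row = conn.execute(
        "SELECT price FROM prices WHERE product = ? ORDER BY scraped_at DESC LIMIT 1",
        (product,),
    ).fetchone()
    return row[0] if row else None
```

Keying each row on product and timestamp means a re-run of the same scrape overwrites rather than duplicates, while still keeping a price history over time.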

In essence, grasping these technical aspects not only equips you with the tools needed for effective data extraction but also empowers you to make informed decisions that can significantly impact your business operations.

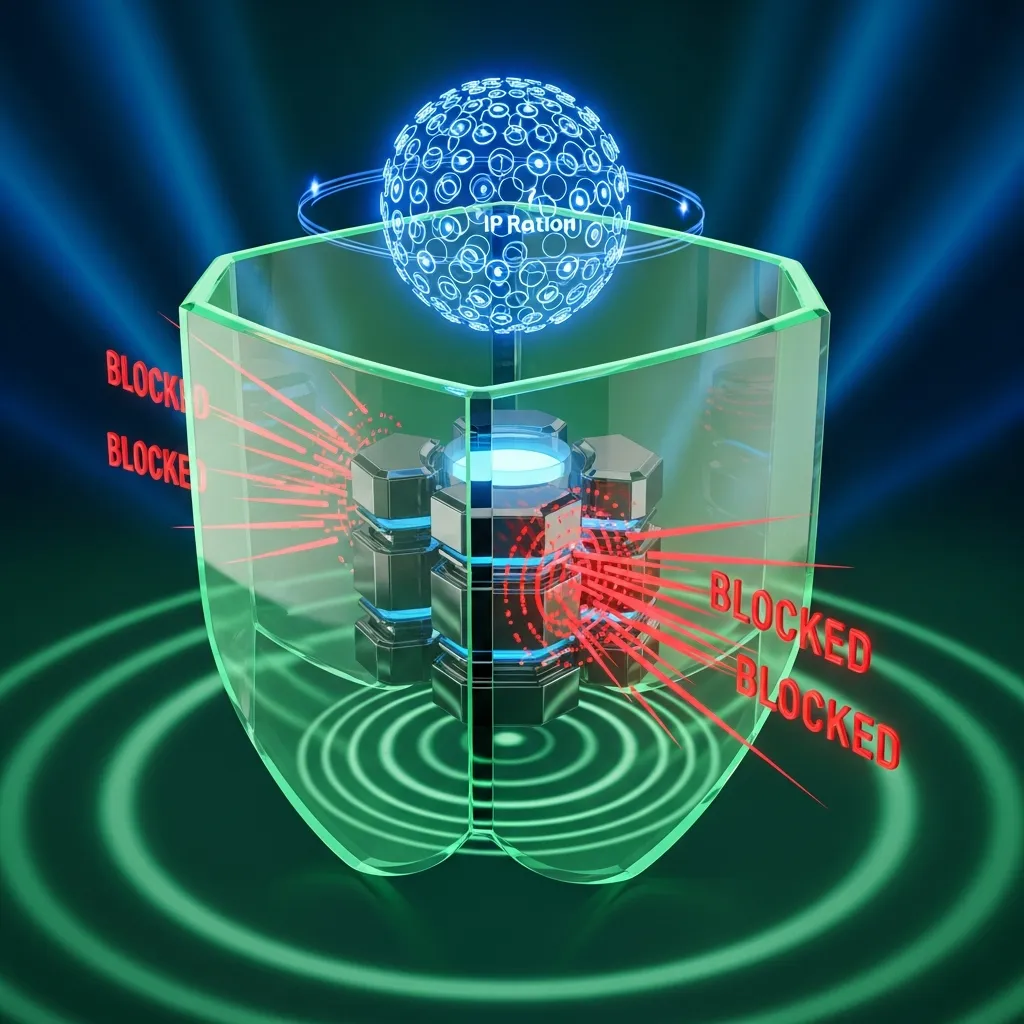

Overcoming the Common Challenges of Screen Scraping

Screen scraping projects tend to hit a few predictable bumps along the way. These challenges can pose significant roadblocks to your data extraction efforts, but with the right strategies, you can navigate through them smoothly.

One of the first hurdles is legal considerations. Different jurisdictions have varying laws regarding data usage and scraping. To stay on the right side of the law, it’s crucial to research and understand the regulations that apply to your specific situation. Consulting with legal experts can provide clarity and help you create a compliant scraping strategy.

Next, let’s talk about anti-scraping measures. Many websites implement sophisticated technologies to detect and block scraping activities, which can feel like a cat-and-mouse game. A few tactics help here. Rotating IP addresses spreads requests across multiple addresses, and headless browsers render pages the way a regular visitor’s browser would. Respecting the website’s robots.txt file serves a different purpose: it keeps your crawler within the limits the site has published, which also reduces the chances of getting blocked.
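Checking robots.txt is the easiest of these to automate. The sketch below uses Python’s standard urllib.robotparser module. The robots.txt content, the example.com URLs, and the user agent name example-scraper are made up for illustration; in practice you would load the file from the target site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in practice it is fetched from the site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def build_robots_checker(robots_txt):
    """Parse robots.txt text into a reusable RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_allowed(parser, url, user_agent="example-scraper"):
    """True if the parsed rules permit this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)
```

A scraper would call is_allowed before each request and skip any URL that returns False. The parser’s crawl_delay method exposes the Crawl-delay value, if the site sets one, which can feed into your request pacing.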

Finally, there’s the critical issue of data accuracy. Inaccurate or incomplete data can lead to poor business decisions. To ensure you’re pulling the right information, implement robust validation checks and use multiple sources for cross-referencing data. Regularly updating your scraping scripts can also help adapt to any changes in the website structure, ensuring the data remains reliable.
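Validation checks like these can start out very simple. The following sketch assumes records shaped as dictionaries with name and price fields, and a 5% tolerance when cross-referencing two sources; the field names, the price pattern, and the threshold are all illustrative choices rather than fixed rules.

```python
import re

# Accepts values such as "19.99", "$19.99" or "20"; anything else is rejected.
PRICE_RE = re.compile(r"^\$?(\d+(?:\.\d{1,2})?)$")


def validate_record(record):
    """Return a list of problems found in one scraped record (empty if clean)."""
    problems = []
    name = (record.get("name") or "").strip()
    if not name:
        problems.append("missing name")
    match = PRICE_RE.match((record.get("price") or "").strip())
    if not match:
        problems.append("unparseable price")
    elif float(match.group(1)) <= 0:
        problems.append("non-positive price")
    return problems


def prices_agree(price_a, price_b, tolerance=0.05):
    """Cross-check two sources: True if the prices differ by at most `tolerance` (fractional)."""
    return abs(price_a - price_b) <= tolerance * max(price_a, price_b)
```

Records that come back with a non-empty problem list can be quarantined for review instead of flowing straight into reports.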

By proactively addressing these challenges, you can unlock the true potential of screen scraping. With a thoughtful approach, the data you gather can become a powerful asset for your business.

Building a Resilient Screen Scraping Framework

When you’re setting out to implement a screen scraping solution, the process can feel daunting. However, by breaking it down into manageable steps, you can create a robust solution that meets your business needs. Let’s explore how to effectively plan, execute, and monitor your scraping project.

Step 1: Planning

Begin with a clear understanding of your objectives. What data do you need, and how will it benefit your operations? Document your requirements and identify the sources from which you’ll scrape data. Consider the legal and ethical implications of scraping these sites, ensuring compliance with their terms of service.

Next, assess the scalability of your solution. As your data needs grow, your scraping framework should be able to adapt without significant overhauls. Plan for future expansion by choosing technologies that can handle increased loads.

Step 2: Execution

With a solid plan in place, it’s time to execute. Choose the right tools and technologies that align with your objectives. Popular libraries like Beautiful Soup or Scrapy for Python can simplify the process, but you might also consider custom solutions if your needs are unique.

During execution, focus on performance. Implement techniques such as parallel processing to increase the speed of data collection. Additionally, make sure to respect the target website’s server load to avoid being blocked. A well-implemented throttle system can help maintain a healthy relationship with the data source.
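Parallel processing and throttling can work together, as the sketch below suggests. It uses a thread pool from the standard library plus a small shared throttle that spaces out request starts. The Throttle class, the fetch_all function, and the default interval are assumptions for this example, and fetch stands for whatever function you use to download a page.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class Throttle:
    """Enforces a minimum interval between request starts across all threads."""

    def __init__(self, min_interval):
        self.min_interval = min_interval
        self._lock = threading.Lock()
        self._next_slot = 0.0

    def wait(self):
        # Reserve the next free time slot under the lock, then sleep outside it
        # so other threads can reserve their own slots in the meantime.
        with self._lock:
            now = time.monotonic()
            slot = max(now, self._next_slot)
            self._next_slot = slot + self.min_interval
        time.sleep(max(0.0, slot - now))


def fetch_all(urls, fetch, min_interval=0.5, max_workers=4):
    """Run `fetch(url)` for each URL in parallel, throttled; results keep input order."""
    throttle = Throttle(min_interval)

    def task(url):
        throttle.wait()
        return fetch(url)

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(task, urls))
```

The worker count controls how many slow responses can be in flight at once, while the interval caps how often new requests hit the server, so the two settings can be tuned separately.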

Step 3: Monitoring

Once your scraping solution is up and running, monitoring becomes crucial. Set up alerts for any changes in the structure of the target website or for any downtime. This proactive approach will help you quickly adapt to changes, ensuring data integrity and continuity.
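One inexpensive way to detect a layout change is to check each scraped batch for missing fields, since a changed page structure usually shows up as empty values. The sketch below assumes records are dictionaries; the function name check_batch and the thresholds are illustrative and would need tuning for real data.

```python
def check_batch(records, required_fields, min_records=1, max_missing_ratio=0.1):
    """Return alert messages for a scraped batch; an empty list means it looks healthy."""
    alerts = []
    if len(records) < min_records:
        alerts.append(f"only {len(records)} records, expected at least {min_records}")
        return alerts
    if not records:
        # Nothing to inspect, and an empty batch was explicitly allowed.
        return alerts
    for field in required_fields:
        missing = sum(1 for r in records if not r.get(field))
        ratio = missing / len(records)
        if ratio > max_missing_ratio:
            alerts.append(f"field '{field}' missing in {ratio:.0%} of records")
    return alerts
```

Any returned messages can be forwarded to email, chat, or whatever alerting channel your team already uses.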

Finally, evaluate the cost-efficiency of your solution. Regularly assess the resources being utilized versus the value derived from the data. This evaluation will help you make informed decisions about scaling or optimizing your operations.

In summary, implementing a screen scraping solution involves meticulous planning, efficient execution, and diligent monitoring. By focusing on scalability, performance, and cost-efficiency, you can create a system that not only meets your current needs but also evolves with your business demands.

Delivering Data to Clients: Optimal Formats and Storage Solutions

When it comes to delivering scraped data to clients, the choice of format and storage solution is crucial. Different formats serve different needs, and understanding these can significantly enhance data usability.

Let’s start with the formats. The most common formats I encounter are CSV, JSON, and XML. CSV files are straightforward and great for tabular data, making them easy to open in spreadsheet applications. On the other hand, JSON is a favorite for developers due to its lightweight structure and ease of integration with web applications. Then there’s XML, which, while a bit more verbose, excels in representing complex data hierarchies. Each format has its strengths, and the choice often depends on how the client intends to use the data.
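To see how the three formats compare, here is a short sketch that serializes the same records with Python’s standard csv, json, and xml.etree.ElementTree modules. The sample records and the element names products and product are made up for the example.

```python
import csv
import io
import json
import xml.etree.ElementTree as ET

# Hypothetical scraped records used for all three formats.
RECORDS = [
    {"name": "Widget", "price": 19.99},
    {"name": "Gadget", "price": 4.5},
]


def to_csv(records):
    """Tabular output: one header row, then one row per record."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"], lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def to_json(records):
    """JSON array of objects, indented for readability."""
    return json.dumps(records, indent=2)


def to_xml(records):
    """Nested XML: a <products> root with one <product> element per record."""
    root = ET.Element("products")
    for record in records:
        item = ET.SubElement(root, "product")
        for key, value in record.items():
            ET.SubElement(item, key).text = str(value)
    return ET.tostring(root, encoding="unicode")
```

Note that CSV and XML carry every value as text, while JSON keeps the price as a number, which is one reason developers often prefer it for integrations.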

Next, we can’t overlook storage options. Depending on the volume of data and client requirements, I often recommend either traditional databases or cloud storage solutions. Databases like MySQL or PostgreSQL offer robust querying capabilities, making them ideal for structured data that requires frequent updates. On the flip side, cloud storage solutions such as AWS S3 or Google Cloud Storage provide scalability and ease of access, which is perfect for large datasets or when clients need to share data across teams.

The ultimate goal is to ensure that the data is not just delivered, but is also usable. This means considering how clients will interact with the data, whether they need real-time access, or if they will perform analysis on it. Usability should always be at the forefront of our data delivery strategy, ensuring that clients can derive maximum value from the data we provide.

Assessing the Value of Screen Scraping for Your Business

When considering the integration of screen scraping solutions into your operations, it’s essential to evaluate the potential ROI these technologies can deliver. Screen scraping can be a game changer, enabling you to gather vast amounts of data from various sources quickly and efficiently. This capability can translate into significant business advantages.

Imagine being able to track your competitors’ pricing strategies or market trends in real-time. By utilizing screen scraping, you can collect this data without the need for extensive manual efforts, allowing your team to focus on more strategic initiatives. The ability to access and analyze data swiftly can lead to better decision-making and ultimately enhance your competitive edge.

Now, let’s talk about project pricing and timelines. The cost of implementing a screen scraping solution can vary based on the complexity of the data you need and the technology stack involved. However, it’s important to view this as an investment rather than an expense. A well-planned project can be executed within a few weeks to a couple of months, depending on your specific requirements. This relatively short timeline means you can start reaping the benefits sooner rather than later.

Moreover, the positive impact on your bottom line can be substantial. By automating data collection, you reduce labor costs and minimize human error. The insights gained from the scraped data can lead to improved operational efficiency and more informed business strategies. In turn, this can boost your revenue streams and enhance profitability.

In summary, evaluating the impact of screen scraping involves understanding not just the initial costs and timelines, but also the long-term benefits it can bring. By harnessing this technology, you position your business for growth and success in an ever-evolving marketplace.

Frequently asked questions

How can businesses effectively gather insights from diverse online sources?

Businesses can leverage techniques like screen scraping to extract data from various online sources, mimicking human browsing behavior to capture information displayed on web pages. This data can then be transformed into a structured format for analysis and integration into business applications, providing invaluable insights for decision-making.

What are the common legal and technical challenges encountered when extracting data from websites?

Common challenges include navigating varying legal considerations across jurisdictions regarding data usage, overcoming sophisticated anti-scraping measures implemented by websites, and ensuring the accuracy and completeness of the extracted data. Strategies like rotating IP addresses, using headless browsers, and robust validation checks are crucial.

How can one ensure the accuracy and reliability of scraped data?

To ensure data accuracy, it’s essential to implement robust validation checks, cross-reference data with multiple sources, and regularly update scraping scripts to adapt to changes in website structures. This proactive approach helps maintain data integrity and reliability for informed business decisions.

What programming languages and tools are best suited for efficient data extraction from websites?

Python is highly popular for data extraction due to its simplicity and rich ecosystem of libraries like Beautiful Soup and Scrapy for parsing HTML/XML. For dynamic content, JavaScript with Node.js and Puppeteer can simulate browser behaviors. The choice depends on the specific requirements and complexity of the scraping task.

How can a robust data extraction framework be built to adapt to growing data needs?

Building a resilient framework involves meticulous planning, efficient execution, and diligent monitoring. This includes defining clear objectives, choosing scalable technologies like cloud services, implementing parallel processing for performance, and setting up alerts for website changes to ensure data continuity and adaptability to future demands.

How can DataFlirt’s screen scraping solutions help my business gain a competitive edge?

DataFlirt’s screen scraping solutions empower your business to gather vast amounts of real-time data, such as competitor pricing and market trends, without extensive manual effort. This enables swift, data-driven decision-making, reduces labor costs, minimizes human error, and ultimately enhances your competitive advantage and profitability.

What are the typical costs and timelines for implementing a screen scraping solution with DataFlirt?

The cost and timeline for implementing a screen scraping solution with DataFlirt vary based on data complexity and technology requirements. However, a well-planned project can typically be executed within a few weeks to a couple of months, offering a relatively short time to start realizing significant ROI and operational benefits.

How does DataFlirt ensure compliance and overcome anti-scraping measures for reliable data delivery?

DataFlirt prioritizes legal and ethical compliance, researching applicable regulations and employing best practices. We overcome anti-scraping measures through advanced techniques like rotating IP addresses, utilizing headless browsers, and respecting robots.txt files, ensuring reliable and continuous data delivery for our clients.