Demystifying Web Crawlers and Their Role in Data Extraction

Web crawlers, also known as spiders or bots, are automated programs that browse the internet systematically. Their primary function is to gather data from web pages, which makes them a core tool for data extraction. Think of them as digital explorers, traversing the web to collect information that can be indexed and analyzed.

When it comes to indexing, web crawlers play a pivotal role. They follow links from one page to another, cataloging content along the way. This process not only helps search engines like Google understand the structure of the web but also allows businesses to harness data from various sources. For instance, e-commerce companies often use web crawlers to monitor competitor pricing, while researchers might extract data for academic purposes.

At a technical level, a crawler starts with a list of URLs to visit, known as seeds. It fetches each of these pages and parses the HTML to identify links to other pages, which are then added to its list for future visits. To avoid fetching the same page twice, it also keeps track of the URLs it has already seen. This continuous loop of fetching, parsing, and following links lets a crawler build a map of the portion of the web it can reach.
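The fetch, parse, and follow loop can be sketched in a few lines of Python using only the standard library. This is a minimal illustration, not a production crawler: the `crawl` function, the `LinkParser` class, and the in-memory `PAGES` dictionary that stands in for the network are all hypothetical names chosen for this example.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class LinkParser(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seeds, fetch, max_pages=100):
    """Breadth-first crawl starting from `seeds`.

    `fetch` is a caller-supplied function (an assumption of this sketch) that
    takes a URL and returns the page's HTML, or None if it cannot be fetched.
    Returns a dict mapping each visited URL to the links found on it.
    """
    frontier = deque(seeds)  # URLs waiting to be visited
    seen = set(seeds)        # URLs already queued, so nothing is fetched twice
    site_map = {}

    while frontier and len(site_map) < max_pages:
        url = frontier.popleft()
        html = fetch(url)
        if html is None:
            continue

        parser = LinkParser()
        parser.feed(html)

        # Resolve relative links against the current page and drop #fragments.
        links = [urldefrag(urljoin(url, href)).url for href in parser.links]
        site_map[url] = links

        for link in links:
            if link not in seen:
                seen.add(link)
                frontier.append(link)

    return site_map


# In-memory stand-in for the network, so the sketch runs offline.
PAGES = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="/">Home</a>',
    "https://example.com/b": '<a href="/a#top">A</a>',
}

site_map = crawl(["https://example.com/"], PAGES.get)
for page, links in site_map.items():
    print(page, "->", links)
```

In real use, `fetch` would wrap an HTTP client, and the crawler would typically also honor each site's robots.txt and limit its request rate. Passing the fetcher in as a parameter keeps the crawl logic testable without network access.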

In essence, web crawlers are crucial for any organization looking to leverage the power of web scraping. Whether it’s for market research, competitive analysis, or content aggregation, understanding how these crawlers function allows you to make informed decisions and strategize effectively. Their ability to navigate and index web pages unlocks a world of data that can drive insights and inform your business strategies.

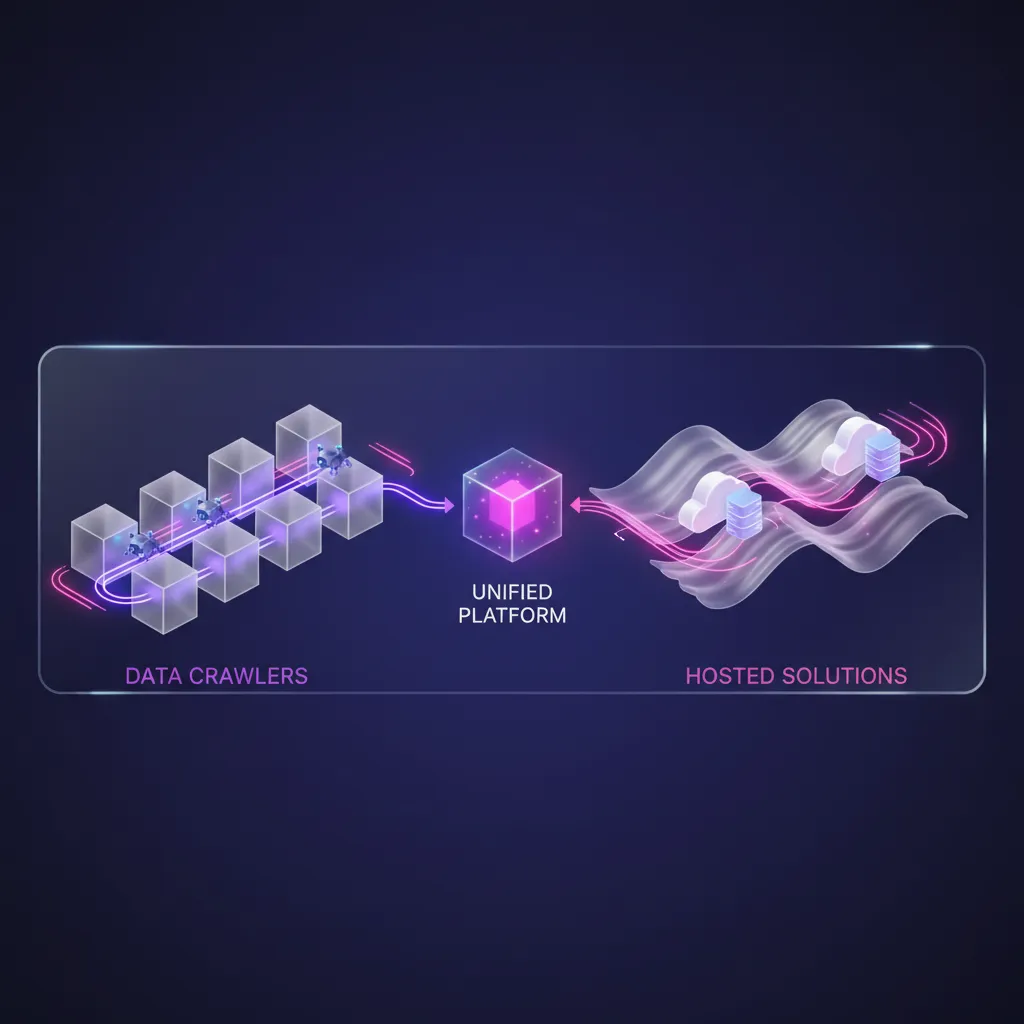

Discover the Power of Hosted Web Scraping Solutions

When it comes to data extraction, hosted web scraping solutions stand out as a practical and efficient choice for businesses looking to harness the power of data without the heavy lifting involved in managing complex infrastructure. These services allow you to focus on deriving insights from the data rather than worrying about the technicalities of data collection.

One of the key features of hosted web scraping solutions is their scalability. Whether you need to scrape a small website for a specific dataset or extract large volumes of data from multiple sources, these solutions can adapt to your needs. They also typically store and deliver results through the cloud, which means you can access your data from anywhere, at any time, with minimal downtime.

The advantages are compelling. You gain access to a team of experts who are constantly maintaining and updating the infrastructure, ensuring you have the latest capabilities at your fingertips. This level of support can be invaluable, especially if your team lacks the technical expertise to manage scraping tools internally. Moreover, hosted solutions often provide a user-friendly dashboard, making it easy for you to monitor your scraping tasks and analyze the results.

Hosted web scraping services are particularly effective in scenarios where quick data acquisition is crucial, such as market research, competitive analysis, or lead generation. They allow you to react to market changes in near real time, giving you a strategic edge over your competitors.

In summary, if you’re looking to streamline your data extraction processes while leveraging robust infrastructure and expert support, hosted web scraping solutions might just be the answer you need.

Scalability and Performance: A Comparative Insight

When it comes to web scraping, understanding the nuances between web crawlers and hosted solutions is essential for optimizing your data collection efforts. Both approaches have their strengths, but they cater to different needs depending on your specific requirements.

Self-managed web crawlers, often deployed on-premises, can be tailored to meet unique demands, allowing for high levels of customization. This flexibility can lead to strong performance when you need to scrape complex websites or handle large volumes of data. For example, if you’re working with a site that frequently changes its structure, your team can update a well-designed crawler’s parsing rules quickly, so you continue to gather accurate data with little interruption.

On the other hand, hosted solutions offer scalability that can be beneficial for businesses experiencing rapid growth or fluctuating data needs. These solutions are often built on cloud infrastructure that can absorb increased loads. If your organization expects sudden spikes in data collection, perhaps due to a new marketing campaign or a product launch, a hosted solution can scale dynamically to accommodate these demands without compromising performance.

However, each approach has its trade-offs. While web crawlers may provide greater control and customization, they require ongoing maintenance and may struggle with performance during peak loads unless properly managed. Conversely, although hosted solutions can efficiently scale, they may lack the fine-tuned control that some businesses need for specific scraping tasks.

Ultimately, the choice between web crawlers and hosted solutions boils down to your operational priorities. Understanding where each excels will empower you to make informed decisions that enhance your data collection strategies.

Assessing Cost-Efficiency and Pricing Models in Web Scraping

When contemplating web scraping solutions, understanding the cost implications is crucial. You might wonder whether to opt for self-hosted web crawlers or leverage hosted scraping services. Both options have their merits, but they also come with distinct financial considerations.

Self-hosted web crawlers may seem appealing at first glance due to the absence of subscription fees. However, it’s essential to factor in hidden costs such as servers and their maintenance, software updates, and potential downtime. Additionally, if your team lacks the necessary expertise, you might face increased expenses in training or hiring skilled developers.

On the other hand, hosted scraping solutions typically charge on a usage or subscription basis, with various pricing models available, including:

- Pay-as-you-go: You pay based on usage, making it ideal for projects with fluctuating data needs.

- Monthly subscriptions: Fixed monthly fees that provide a predictable budget for ongoing scraping requirements.

- Enterprise plans: Tailored packages designed for businesses with extensive data needs, often offering additional features and support.
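To make the comparison between the first two models concrete, the short sketch below computes the monthly volume at which a flat subscription costs the same as pay-as-you-go. The prices are hypothetical and chosen only for illustration, and the sketch assumes the subscription has no request cap; real plans vary by provider.

```python
def pay_as_you_go_cost(requests, price_per_1k):
    """Usage-based cost: pay only for the requests actually made."""
    return requests / 1000 * price_per_1k


def break_even_requests(monthly_fee, price_per_1k):
    """Monthly request volume at which a flat fee matches pay-as-you-go."""
    return monthly_fee / price_per_1k * 1000


# Hypothetical prices, for illustration only.
PRICE_PER_1K = 2.00   # pay-as-you-go price per 1,000 requests
MONTHLY_FEE = 300.00  # flat monthly subscription, assumed uncapped

threshold = break_even_requests(MONTHLY_FEE, PRICE_PER_1K)
print(f"Break-even volume: {threshold:,.0f} requests per month")

for volume in (50_000, 200_000, 400_000):
    usage_cost = pay_as_you_go_cost(volume, PRICE_PER_1K)
    cheaper = "pay-as-you-go" if usage_cost < MONTHLY_FEE else "monthly subscription"
    print(f"{volume:>9,} requests: usage ${usage_cost:,.2f} "
          f"vs flat ${MONTHLY_FEE:,.2f} -> {cheaper}")
```

Below the break-even volume, usage-based pricing is cheaper; above it, the flat fee wins. Running the same calculation with a provider's actual prices and your expected volume gives a quick first check before comparing features and support.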

While hosted solutions might seem pricier upfront, they can deliver better long-term cost efficiency, depending on your data volume. With reduced overhead for infrastructure and maintenance, you can focus on extracting valuable insights from your data instead of managing the scraping process itself. Over time, the ROI can be higher, since you benefit from up-to-date technology and support without the burden of in-house management.

Ultimately, your choice should align with your business goals and budget constraints, ensuring you maximize the benefits of web scraping while keeping costs manageable.

Ensuring High Data Accuracy and Quality