The $3.4 Trillion Signal Layer: Why Cryptocurrency Data Scraping Has Become a Strategic Infrastructure Decision

The global cryptocurrency market capitalization crossed $3.4 trillion in late 2024, briefly surpassing the GDP of the United Kingdom before a correction cycle pulled it back toward $2.8 trillion in early 2025. As of Q1 2026, it is tracking above $3.1 trillion again, with institutional participation at levels that would have been unthinkable four years ago. According to industry estimates, over 560 million people globally now hold some form of digital asset, and daily trading volume across centralized and decentralized exchanges routinely exceeds $200 billion.

Yet despite this scale, the data infrastructure most crypto investment teams, product organizations, risk functions, and compliance departments actually rely on is surprisingly fragmented. Exchange APIs are rate-limited, structurally inconsistent, and in many cases undocumented. Commercial data vendors charge five- and six-figure annual fees for normalized market data that still excludes the long tail of tokens, protocols, and chains where the most interesting signals live. On-chain analytics platforms surface aggregate dashboards but rarely deliver the raw, field-level data that data teams need to build proprietary models. Social sentiment platforms scan selected channels and sell curated indexes, missing the granular, source-level signal density that genuine alpha generation requires.

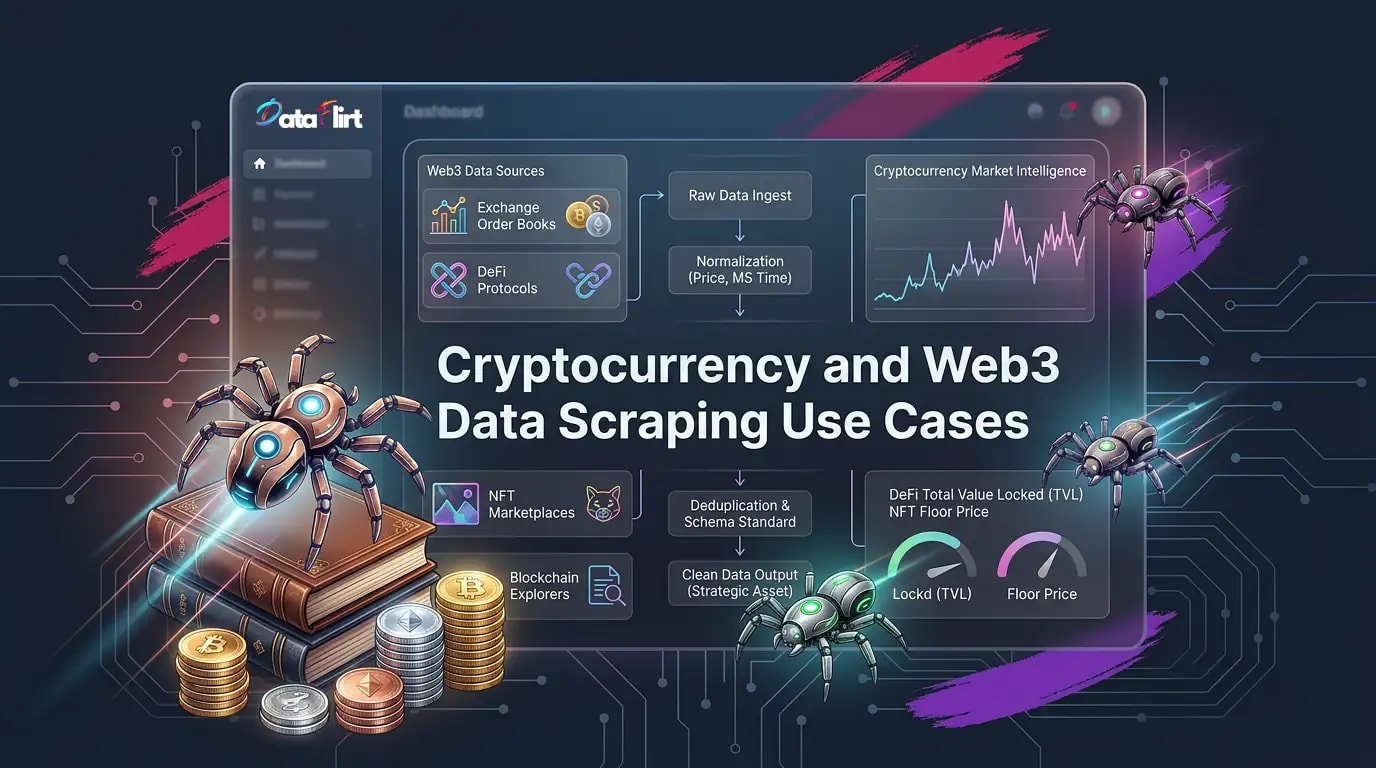

This is the intelligence gap that cryptocurrency data scraping directly addresses.

“The public web is the world’s most comprehensive, continuously updated crypto intelligence layer. Every exchange, aggregator, explorer, DeFi analytics portal, NFT marketplace, governance forum, and crypto media outlet is publishing structured market and protocol intelligence in near-real time. The competitive advantage belongs to the organizations that can systematically collect, normalize, and activate that data faster and more completely than their peers.”

The scale of publicly accessible cryptocurrency data is genuinely staggering. CoinGecko tracks over 15,000 active tokens across more than 1,000 exchanges. DeFiLlama monitors total value locked (TVL) across 270+ blockchains and 5,000+ DeFi protocols. Etherscan alone indexes and surfaces billions of Ethereum mainnet transactions, and comparable explorers cover every major L1 and L2 chain. NFT aggregators index floor prices, sales history, and trait rarity across hundreds of collections, updated in near-real time. Crypto Twitter, Reddit, Telegram, and Discord generate millions of data points daily that are accessible for analysis at the source level.

None of this data is locked behind institutional data agreements. All of it is publicly visible. But only a fraction of organizations operating in this space have the data infrastructure to collect it systematically, normalize it rigorously, and deliver it in formats that business, product, and risk teams can actually use.

This guide is not about building scrapers. It is about understanding what cryptocurrency data scraping actually delivers, how to define data quality requirements for your specific use case, how different roles inside your organization can extract differentiated value from the same underlying data sources, and how to make an informed decision between a one-time data acquisition project and a continuous Web3 data extraction program.

For foundational context on how data acquisition strategy connects to business outcomes, see DataFlirt’s perspective on data for business intelligence and the broader case for alternative data as a driver of enterprise growth.

The Market Context You Need to Understand Before Making a Data Decision

Before mapping cryptocurrency data scraping to specific use cases, it is worth establishing the market dynamics that make data freshness and completeness so commercially critical in 2026.

Institutional participation has fundamentally changed data latency requirements. The approval of spot Bitcoin and Ethereum ETFs in the United States in 2024 triggered a wave of institutional capital flows that raised the bar for data infrastructure across the entire market. Institutional trading desks are accustomed to the data latency standards of traditional financial markets, and when they enter crypto, they bring those expectations with them. Organizations still operating on hourly price snapshots are making decisions against institutional counterparties working with sub-second data feeds.

DeFi TVL has re-expanded past $120 billion. After the contraction of 2022 to 2023, total value locked across DeFi protocols recovered through 2024 and 2025, crossing $120 billion by Q1 2026 according to on-chain aggregator data. This re-expansion has created a new class of data need: protocol-level performance benchmarking, yield rate comparison across chains, and liquidity pool health monitoring. None of this data is available through traditional financial data vendors. All of it requires Web3 data extraction from protocol analytics portals and on-chain sources.

NFT market dynamics have bifurcated sharply. The 2021 to 2022 NFT market was broadly correlated: everything moved together. The current market has bifurcated into a small number of blue-chip collections with genuine price discovery, a mid-tier of project-specific dynamics driven by utility and community, and a long tail of low-liquidity collections with thin trading. Understanding which category a collection occupies, and how quickly it can transition between them, requires granular blockchain data collection at the collection and token level, not the aggregate market level.

Regulatory pressure is creating compliance data demand that did not exist two years ago. The implementation of the EU’s Markets in Crypto-Assets Regulation (MiCA) through 2025 and 2026 has imposed compliance data requirements on European crypto businesses that go far beyond what existing commercial data vendors were built to provide. Travel Rule compliance, sanctioned address screening, and transaction monitoring for crypto service providers now require structured, auditable blockchain data collection at a scale and depth that internal teams struggle to build without external data infrastructure.

Token proliferation has made completeness an acute problem. Over 15,000 tokens are tracked by major aggregators, but the actual number of tradeable tokens across all chains and DEXs is substantially higher. Most commercial data vendors have coverage thresholds below which tokens are simply not tracked. For funds and platforms operating in mid-cap and small-cap token markets, cryptocurrency data scraping of long-tail exchange and DEX data is not optional; it is the only way to maintain analytical coverage of the universe they operate in.

Who Is Actually Reading This Data: Role-Based Intelligence Needs

The same underlying cryptocurrency data scraping infrastructure can serve radically different organizational functions depending on how data is processed, structured, and delivered to each team. Designing a data acquisition program that serves the organization rather than a single workflow requires mapping these role-based needs explicitly before any collection architecture is defined.

The Quant Analyst and Systematic Trader

Quant analysts and systematic traders are the most latency-sensitive audience in the crypto data ecosystem. They need granular, high-frequency market data, order book snapshots, trade history, funding rates, and derivatives market structure data to build and validate trading signals, backtest strategies, and monitor live model performance.

For a quant team, cryptocurrency data scraping fills the gaps that official exchange APIs leave open: historical data completeness beyond the rolling window that most APIs provide, cross-exchange normalization that eliminates the field naming and timestamp inconsistencies that corrupt multi-venue signal research, and long-tail token coverage that APIs from major venues do not include.

What quant teams need from scraped crypto data:

- Trade-by-trade execution data with millisecond timestamps across multiple venues

- Order book depth snapshots at configurable intervals (1-second, 5-second, 1-minute)

- Funding rate history across perpetual futures venues

- Open interest and long/short ratio data as sentiment proxies

- Cross-exchange price spread data for arbitrage signal research

- Historical token listing and delisting event data as event study inputs

- Liquidation cascade data for volatility regime modeling

Data quality standard for quant teams: Timestamp precision to the millisecond is non-negotiable. Price and volume fields must be normalized to a common denomination (USD equivalent) with explicit FX conversion documentation. Any gap in the time series must be explicitly flagged, not silently interpolated.
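The "flag, don't interpolate" rule can be illustrated with a minimal sketch. It assumes snapshots have already been normalized to UTC epoch milliseconds and are expected at a fixed interval. The `Gap` record and the `interval_ms` and `tolerance_ms` parameters are illustrative, not a standard schema. The gap detector reports each missing stretch and leaves the series itself untouched:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gap:
    """A missing stretch in a snapshot series, reported rather than filled."""
    start_ms: int        # last observation before the gap (UTC epoch ms)
    end_ms: int          # first observation after the gap (UTC epoch ms)
    missing_points: int  # expected snapshots that never arrived


def flag_gaps(timestamps_ms: list[int], interval_ms: int, tolerance_ms: int = 0) -> list[Gap]:
    """Return every gap in a series expected every `interval_ms` milliseconds.

    No values are interpolated; the caller decides how each gap is treated.
    """
    if interval_ms <= 0:
        raise ValueError("interval_ms must be positive")
    ordered = sorted(set(timestamps_ms))
    gaps = []
    for prev, curr in zip(ordered, ordered[1:]):
        delta = curr - prev
        if delta > interval_ms + tolerance_ms:
            # Count expected snapshot times strictly between prev and curr.
            gaps.append(Gap(prev, curr, (delta - 1) // interval_ms))
    return gaps
```

For example, `flag_gaps([0, 1000, 2000, 5000, 6000], 1000)` reports one gap between 2000 and 5000 with two missing snapshots. Keeping gaps as explicit records lets each downstream model choose its own treatment.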

The Risk Manager and Portfolio Risk Team

Risk teams at crypto funds, crypto-native banks, and traditional financial institutions with digital asset exposure use cryptocurrency data scraping to build early warning systems for market stress events, monitor counterparty exposure concentrations, and maintain continuously current volatility and correlation models.

The specific signals risk teams extract from scraped crypto data are often not available through any commercial data vendor at the granularity and freshness required. Exchange wallet inflow and outflow data, derived from blockchain data collection and on-chain analytics portals, is a leading indicator of sell pressure that can precede a price move by hours. Funding rate extremes across perpetual futures venues signal overleveraged positions that historically precede sharp drawdowns. Stablecoin supply dynamics on-chain provide a real-time proxy for market-wide risk appetite that no traditional financial indicator can replicate.

What risk teams need from scraped crypto data:

- Exchange net flow data: net inflows and outflows to major venue hot wallets as an early sell pressure signal

- Funding rate extremes across major perpetuals venues flagged in real time

- Stablecoin supply changes on major chains as a risk appetite proxy

- Options market implied volatility surface data across strike and expiry dimensions

- Whale wallet transaction alerts: large-value transfers from known accumulation addresses to exchange deposit addresses

- Correlation matrix refreshes across the token universe at weekly cadence for portfolio risk model inputs

- Liquidation depth maps: estimated forced selling volumes at defined price levels
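Once transfers have been collected and exchange wallets labelled, the exchange net flow signal in the first item reduces to a simple aggregation. The sketch below assumes a hypothetical `Transfer` record and a pre-built set of exchange addresses. Building and maintaining that address set is the hard part in practice and is not shown. As a simplifying assumption, the sketch also excludes transfers between two exchange-labelled wallets, treating them as carrying no sell-intent signal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transfer:
    sender: str
    recipient: str
    amount: float  # denominated in the tracked asset, e.g. BTC or ETH


def exchange_net_flow(transfers: list[Transfer], exchange_addresses: set[str]) -> float:
    """Net flow into labelled exchange wallets (positive = coins moving onto exchanges).

    Transfers where both sides are exchange-labelled are skipped (an assumption:
    they mostly reflect wallet management rather than holder intent).
    """
    inflow = outflow = 0.0
    for t in transfers:
        to_exchange = t.recipient in exchange_addresses
        from_exchange = t.sender in exchange_addresses
        if to_exchange and not from_exchange:
            inflow += t.amount
        elif from_exchange and not to_exchange:
            outflow += t.amount
    return inflow - outflow
```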

The Compliance Officer and Regulatory Intelligence Team

Compliance is the fastest-growing driver of blockchain data collection demand in 2026. MiCA implementation, Financial Action Task Force (FATF) Travel Rule requirements, OFAC sanctions screening obligations for US-connected entities, and the expanding scope of anti-money laundering (AML) frameworks across jurisdictions have created compliance data requirements that existing commercial screening tools were not built to satisfy at the depth that regulators now expect.

Cryptocurrency data scraping for compliance teams is categorically different from market intelligence scraping. The primary data requirement is not price or volume but structured, auditable, linkage-quality data: wallet address mapping, transaction graph data, exchange address identification, and entity attribution for known actors in the on-chain ecosystem.

What compliance teams need from scraped blockchain data:

- Exchange deposit and withdrawal address databases, continuously updated as venues add new addresses

- Known mixer and obfuscation service address lists from blockchain analytics forums, security research publications, and regulatory disclosures

- Governance and DAO participant wallet lists for entity attribution in DeFi-native compliance contexts

- Sanctioned entity address lists scraped and normalized from OFAC, EU consolidated list, and HM Treasury publications

- Transaction volume and flow patterns for VASP (Virtual Asset Service Provider) due diligence

- Token project team wallet identification for insider trading detection in early-stage token markets

Critical note for compliance data programs: Blockchain data collection for compliance purposes operates under specific regulatory frameworks in each jurisdiction. Any compliance data scraping program must be designed in consultation with legal counsel and must include explicit data governance documentation, audit trails, and retention policies aligned to applicable AML and data protection regulations.
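One small, concrete piece of that audit trail is how scraped sanctions lists are merged. The sketch below uses hypothetical record and function names. It normalizes addresses before matching and keeps one entry per publishing source, each with a retrieval timestamp. Only 0x-prefixed EVM hex addresses are lowercased, because their mixed case is an EIP-55 checksum rather than part of the identity. Other formats, such as case-sensitive base58 Bitcoin addresses, are kept exactly as published.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone

# 0x followed by 40 hex characters: matched case-insensitively after lowercasing.
_EVM_ADDRESS = re.compile(r"^0x[0-9a-fA-F]{40}$")


@dataclass(frozen=True)
class ScreeningEntry:
    address: str
    source: str        # publishing list, e.g. "OFAC SDN"
    retrieved_at: str  # ISO-8601 UTC timestamp, kept for the audit trail


def normalize_address(raw: str) -> str:
    addr = raw.strip()
    return addr.lower() if _EVM_ADDRESS.match(addr) else addr


def merge_lists(lists: dict[str, list[str]], retrieved_at: datetime) -> dict[str, list[ScreeningEntry]]:
    """Merge raw address lists into one index keyed by normalized address.

    An address published by several sources keeps one entry per source,
    so every screening hit can be traced back to its origin.
    """
    stamp = retrieved_at.astimezone(timezone.utc).isoformat()
    index: dict[str, list[ScreeningEntry]] = {}
    for source, raw_addresses in lists.items():
        for raw in raw_addresses:
            addr = normalize_address(raw)
            if not addr:
                continue  # skip blank lines left over from scraping
            entries = index.setdefault(addr, [])
            if all(e.source != source for e in entries):
                entries.append(ScreeningEntry(addr, source, stamp))
    return index
```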

The Product Manager at a Crypto Exchange, DeFi Protocol, or Web3 Platform

Product managers building crypto trading platforms, DeFi yield aggregators, NFT marketplaces, portfolio trackers, or Web3 analytics tools rely on crypto market intelligence derived from competitive data as a core product input. Their needs are structural and comparative: how are competing products pricing their services, what features are they surfacing to users, how are protocol metrics evolving on competing chains, and where are the feature and coverage gaps that represent product opportunities?

Web3 data extraction for product managers is a genuinely underappreciated use case. It is not just about the token price; it is about the platform behavior, the incentive structure, the user experience signals, and the protocol health metrics that define competitive positioning in a market where users switch platforms with near-zero friction.

What product managers need from scraped crypto market intelligence:

- Competitor fee structures: trading fees, withdrawal fees, maker/taker fee tiers, and staking commission rates scraped from exchange fee schedule pages and DeFi protocol documentation

- Feature comparison data: which chains and tokens competing platforms support, updated continuously as they add coverage

- Protocol TVL and user count benchmarking across DeFi competitors on the same chain

- DeFi yield rate comparison: current APY and APR rates across competing lending protocols and liquidity pools

- NFT marketplace seller fee benchmarking and royalty policy tracking

- Token listing timelines on competing exchanges as a competitive intelligence signal for listing prioritization

- Community growth proxies: Discord member counts, Reddit subscriber trends, and governance participation rates scraped from public community pages
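Yield comparisons break down quickly when one protocol publishes APR and another publishes APY. A minimal normalization sketch converts between the two with the standard compounding formula, APY = (1 + APR/n)^n - 1. The compounding frequency `n` for each protocol is an assumption the data team has to document, because protocols do not always publish it.

```python
def apr_to_apy(apr: float, periods_per_year: int) -> float:
    """Convert a nominal APR (0.05 = 5%) to APY, assuming `periods_per_year` compounding periods."""
    if periods_per_year <= 0:
        raise ValueError("periods_per_year must be positive")
    return (1 + apr / periods_per_year) ** periods_per_year - 1


def apy_to_apr(apy: float, periods_per_year: int) -> float:
    """Inverse conversion, useful when a competitor only publishes APY."""
    if periods_per_year <= 0:
        raise ValueError("periods_per_year must be positive")
    return periods_per_year * ((1 + apy) ** (1 / periods_per_year) - 1)
```

With monthly compounding, for example, a 12% APR corresponds to roughly 12.68% APY. The gap widens as rates and compounding frequency rise.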

The Data Lead and Analytics Architect

Data leads at crypto organizations are the infrastructure layer that every other function depends on. They own the models: pricing models, risk models, liquidation cascade simulators, valuation frameworks for DeFi positions, and ML-based anomaly detectors for compliance and security. The quality of everything they build is determined by the quality of the data they receive.

For data leads, the primary concern with scraped cryptocurrency data is not what sources are covered but how the data quality pipeline is designed: schema consistency across incompatible sources, timestamp normalization across venues in different time zones with different precision standards, price normalization to a common base currency, and deduplication of tokens that trade under different tickers on different chains.
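Timestamp normalization shows why pipeline design matters more than the source list. Venues variously publish epoch seconds, epoch milliseconds, epoch microseconds, and ISO-8601 strings. The sketch below converts all of these to integer UTC milliseconds. Guessing the epoch unit from magnitude is a heuristic assumption; in production, the unit is better fixed per source in the schema mapping.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def to_utc_ms(raw: "int | float | str") -> int:
    """Normalize a venue timestamp to integer UTC epoch milliseconds.

    Numeric inputs: the unit is guessed from magnitude (an assumption that holds
    for present-day dates): below 1e11 -> seconds, below 1e14 -> milliseconds,
    otherwise microseconds. Nanosecond inputs are not supported.
    String inputs: parsed as ISO-8601; a trailing "Z" or a missing offset is
    treated as UTC.
    """
    if isinstance(raw, str):
        dt = datetime.fromisoformat(raw.strip().replace("Z", "+00:00"))
        if dt.tzinfo is None:
            dt = dt.replace(tzinfo=timezone.utc)
        # Integer division of timedeltas avoids float rounding error.
        return (dt - EPOCH) // timedelta(milliseconds=1)
    value = float(raw)
    if value < 1e11:
        return round(value * 1000)
    if value < 1e14:
        return round(value)
    return round(value / 1000)
```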

A token that trades as WETH on Uniswap and as ETH on centralized exchanges, and that appears in DeFi protocol TVL calculations as both ETH and Wrapped ETH, generates significant data quality complexity if those representations are not resolved to a canonical token record before the data reaches the analytics layer.
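A minimal sketch of that resolution step assumes a hand-maintained alias table; the `ALIASES` entries and context labels are hypothetical. It maps each venue-specific representation to one canonical record and keeps the wrapped/native distinction as an attribute. Unknown representations raise an error instead of silently creating a new token, so they can be routed to review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CanonicalToken:
    canonical_id: str
    is_wrapped: bool  # preserves the wrapped/native distinction without splitting the record


# Hypothetical alias table: (source context, published symbol) -> canonical record.
ALIASES = {
    ("uniswap", "WETH"): CanonicalToken("ethereum", True),
    ("cex", "ETH"): CanonicalToken("ethereum", False),
    ("defi_tvl", "ETH"): CanonicalToken("ethereum", False),
    ("defi_tvl", "Wrapped ETH"): CanonicalToken("ethereum", True),
}


def resolve(context: str, symbol: str) -> CanonicalToken:
    """Map a venue-specific representation to its canonical token, failing loudly if unmapped."""
    try:
        return ALIASES[(context, symbol.strip())]
    except KeyError:
        raise LookupError(f"unmapped token {symbol!r} in context {context!r}") from None
```

Keying on symbols is a simplification. Symbols collide across chains, so a production alias table would more likely key on chain plus contract address.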

For data leads, the most critical decision in a cryptocurrency data scraping program is not which sources to collect from but how the normalization and quality pipeline is designed. DataFlirt’s approach to this challenge follows a four-layer architecture described in detail in the data quality section below.

The Growth and Marketing Team at a Crypto or Web3 Company

Growth teams at crypto exchanges, DeFi protocols, NFT platforms, and Web3 infrastructure companies use scraped crypto market intelligence in ways that are structurally different from their analytical counterparts. They are not asking “what is the market doing?” They are asking “where is the audience, what are they talking about, and how do we position ahead of the next narrative cycle?”

Cryptocurrency data scraping for growth teams centers on three capabilities: community intelligence (what is being discussed, by whom, and on which platforms), competitive positioning data (how competitors are structuring their acquisition and retention narratives), and token ecosystem monitoring (which projects are gaining momentum and could represent partnership or co-marketing opportunities).

What growth teams need from scraped crypto data:

- Social mention volume and sentiment trends across crypto Twitter, Reddit communities (r/CryptoCurrency, r/ethereum, r/defi, project-specific subreddits), and Telegram public channels

- Influencer activity mapping: which wallets and accounts are actively promoting or criticizing specific tokens and protocols

- Community growth velocity data scraped from public Discord invite pages, Telegram group member counts, and Reddit subscriber data

- Competitor airdrop and incentive program tracking: what token distribution mechanisms are competing protocols running and what participation thresholds are they setting

- Token narrative clustering: which themes are dominant in crypto media coverage and community discussion at any given moment

- New project launch monitoring: token presale announcements, IDO listings, and new DEX pair creation as early signals of emerging narratives

What Cryptocurrency Data Scraping Actually Delivers: The Complete Data Taxonomy

Cryptocurrency data scraping is not a single activity. The data extractable from public crypto web sources spans an enormous range of types, each with distinct utility for different business functions. Understanding this taxonomy is the first step toward specifying a data acquisition program that actually serves your needs.

For context on how large-scale data collection challenges are managed in production environments, see DataFlirt’s overview of large-scale web scraping and data extraction challenges.

Market Price and Volume Data

The foundational layer of cryptocurrency data scraping: spot price, 24-hour trading volume, market capitalization, circulating supply, fully diluted valuation, price change percentages across multiple timeframes, and bid/ask spread data. This data is available across aggregator platforms that consolidate price feeds from hundreds of exchanges, providing a normalized view of multi-venue price discovery.

The analytical value of aggregator-sourced price data is in its breadth and comparability: you can track price behavior for 15,000+ tokens in a single structured dataset. The limitation is that aggregator data loses the venue-level granularity that quant teams and arbitrage researchers need. For those use cases, direct exchange order book scraping is required alongside aggregator data.

Volume and coverage dimensions:

- Number of trackable tokens: 15,000 to 20,000+ across major aggregators

- Number of exchanges covered: 500 to 1,000+ including CEXs and DEXs

- Data refresh cadence: 60 seconds on most aggregators, with some providing 30-second or real-time feeds

- Historical depth: up to 5 years on established tokens; limited for newer tokens by their listing date

Order Book and Exchange Microstructure Data

Order book data is the most analytically valuable and technically demanding category of cryptocurrency data scraping. Level 2 order book snapshots, capturing bid and ask depth at each price level, provide the raw material for market microstructure research, liquidity analysis, slippage modeling, and institutional trade execution optimization.

This data is not available from aggregators. It requires direct collection from individual exchange endpoints, normalized across the different schema conventions that each venue uses. A centralized exchange might express order book depth as an array of price-quantity pairs. A decentralized exchange might express it as pool reserves and a pricing curve function. A derivatives venue might express it as a combination of spot order book and funding rate. All three represent the same underlying concept (liquidity available at a given price), but each requires fundamentally different normalization logic.
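To make CEX and DEX liquidity comparable, both representations can be reduced to the same question: how much of the base asset can be sold before the price falls by a given percentage? The sketch below assumes a constant-product pool (x * y = k, as in Uniswap v2-style AMMs) and ignores swap fees. Concentrated-liquidity pools would need tick-level data and are not covered.

```python
import math


def cex_bid_depth(bids: list[tuple[float, float]], mid: float, pct: float) -> float:
    """Base-asset quantity resting on the bid side within `pct` below mid.

    `bids` is a list of (price, quantity) pairs, the common CEX format.
    """
    floor = mid * (1 - pct)
    return sum(qty for price, qty in bids if price >= floor)


def amm_bid_depth(base_reserve: float, pct: float) -> float:
    """Base-asset quantity a constant-product pool absorbs before its price falls by `pct`.

    Price is p = y / x = k / x**2, so p drops to p0 * (1 - pct) when
    x = x0 / sqrt(1 - pct). Depth is that new reserve minus x0. Fees are ignored.
    """
    if not 0 < pct < 1:
        raise ValueError("pct must be between 0 and 1")
    return base_reserve * (1 / math.sqrt(1 - pct) - 1)
```

Both functions return base-asset quantity within the same percentage band. Order book and AMM liquidity can therefore sit in one column of a normalized dataset.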

What order book data unlocks:

- Bid/ask spread modeling across venues for execution quality benchmarking

- Liquidity depth analysis for large-position sizing without market impact

- Order book imbalance signals as short-term directional predictors

- Hidden liquidity detection through order flow analysis

- Wash trading detection: anomalous volume patterns relative to order book depth

DeFi Protocol Metrics

DeFi data is one of the most rapidly evolving categories of Web3 data extraction, and one where the gap between what commercial vendors provide and what sophisticated users need is widest. Total value locked by protocol and by chain, liquidity pool reserves and utilization rates, lending protocol supply and borrow APYs, governance token distribution and voting participation, and protocol revenue metrics are all publicly available from analytics portals and on-chain sources.

The complexity of DeFi data scraping lies in the structural diversity of the source data. A lending protocol, an automated market maker, a liquid staking provider, and a yield aggregator are all classified as DeFi but expose fundamentally different data models. A comprehensive DeFi data extraction program requires source-specific schema mapping for each protocol category, not a universal field template.

Key DeFi metrics available through cryptocurrency data scraping:

- TVL by protocol, by chain, and by token denomination

- Pool liquidity depth and concentration ratios for DEX AMMs

- Lending rate spreads: borrow APY minus supply APY across competing lending markets

- Governance vote participation rates and outcome distributions

- Protocol revenue: trading fees generated, distributed to token holders versus retained in treasury

- Token incentive emissions schedules and annualized incentive costs relative to protocol revenue

- User count proxies derived from unique wallet interactions per protocol per week

NFT Market Data

NFT market intelligence through blockchain data collection and marketplace scraping covers: floor prices by collection, sales volume by collection and trait category, rarity score distributions, average sales price trends over rolling time windows, listing-to-sales conversion rates, wash trading indicators, and wallet concentration metrics that signal centralized control of a collection’s apparent market activity.

The analytical differentiation in NFT data is at the trait and token level. Collection-level floor price data is widely available and provides minimal edge. Trait-level floor price tracking, rarity-adjusted value estimation, and buyer/seller wallet behavior analysis are the data layers that actually support investment decisions in NFT markets.

NFT data dimensions that matter for business decisions:

- Floor price by collection with 1-hour, 24-hour, and 7-day delta

- Sales count and volume by collection at the same cadences

- Trait-level premium: price premium for rare traits versus floor tokens

- Listing depth at various price levels above floor as a supply pressure indicator

- New listing velocity: rate of new listings entering the market as an early sell-pressure signal

- Holder concentration: percentage of supply held by top 10, top 100, top 1,000 wallets

- Wash trading probability score based on buyer/seller address reuse patterns
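Once per-wallet balances have been collected, the holder concentration metric above is straightforward to compute. The sketch below assumes a hypothetical mapping of wallet address to token count. In practice, marketplace escrow and burn addresses may need to be excluded first, which is not shown.

```python
def holder_concentration(balances: dict[str, int], top_ns: tuple[int, ...] = (10, 100, 1000)) -> dict[int, float]:
    """Share of total supply held by the largest `n` wallets, for each n in `top_ns`."""
    ordered = sorted(balances.values(), reverse=True)
    supply = sum(ordered)
    if supply == 0:
        return {n: 0.0 for n in top_ns}
    return {n: sum(ordered[:n]) / supply for n in top_ns}
```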

On-Chain Behavioral Signals

On-chain data derived from public blockchain explorers and analytics portals represents the most distinctive category of cryptocurrency data scraping: signals that have no equivalent in traditional financial markets. Exchange net flow data, whale wallet tracking, miner and validator behavior, stablecoin supply dynamics, and cross-chain bridge flow data are all extractable from public sources and provide genuine predictive signal for both market direction and risk events.

High-value on-chain signals for business teams:

- Exchange inflow/outflow: net BTC or ETH moving to or from exchange deposit wallets as a selling intent indicator

- Stablecoin supply growth: new USDC and USDT minting as a proxy for fresh capital entering the market

- Whale accumulation: large-wallet addresses increasing their holdings during price weakness as a contrarian signal

- Miner/validator outflows: transfer of block rewards to exchanges as a selling pressure indicator

- Bridge flow data: cross-chain capital movement as an indicator of which ecosystems are attracting new capital

- Long-term holder supply: percentage of Bitcoin supply unmoved for more than 1 year as a market cycle indicator
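The long-term holder metric can be sketched in a few lines. The sketch assumes the pipeline already holds unspent outputs (or balances) paired with the time they last moved. The one-year threshold follows the definition in the list above. Analytics providers differ on the exact definition, so treat this as one illustrative variant.

```python
from datetime import datetime, timedelta


def long_term_holder_share(
    outputs: list[tuple[float, datetime]],
    as_of: datetime,
    min_age: timedelta = timedelta(days=365),
) -> float:
    """Share of supply whose coins have not moved for at least `min_age`.

    `outputs` holds (amount, last_moved_at) pairs, e.g. unspent outputs paired
    with the timestamp of the block that created them.
    """
    total = sum(amount for amount, _ in outputs)
    if total == 0:
        return 0.0
    dormant = sum(amount for amount, moved in outputs if as_of - moved >= min_age)
    return dormant / total
```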

Token Project Intelligence

Token-level intelligence beyond price and volume covers the data points that inform investment due diligence, listing decisions, and risk assessments for specific projects: token unlock schedules scraped from project documentation and vesting contract data, development activity metrics from public repositories, audit report status from security firm publication pages, team wallet transaction behavior, and tokenomics structure from whitepaper and protocol documentation sources.

Token project data available through cryptocurrency data scraping:

- Vesting schedule: team and investor token unlock dates and quantities

- Development activity: commit frequency, contributor count, and open pull request volume from public repositories

- Audit status: completed and pending audit reports from security firm publication pages

- Treasury wallet balances and spending rates scraped from on-chain data and project transparency reports

- Partnership announcement timing relative to price movements for event study research

- Token concentration: top holder wallet analysis for centralization risk assessment
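Vesting data becomes useful once it is turned into forward-looking unlock quantities. The sketch below assumes one common schedule shape: a cliff followed by linear vesting from the start date. It works in days to avoid month arithmetic. Real schedules also include TGE unlocks and stepped monthly releases, which these hypothetical helpers do not model.

```python
from datetime import date, timedelta


def unlocked_amount(total: float, start: date, cliff_days: int, duration_days: int, on: date) -> float:
    """Tokens unlocked by `on` under an assumed cliff-plus-linear schedule.

    Nothing unlocks before the cliff; from the cliff onward the unlocked amount
    is the linear share of `total` accrued since `start`, capped at `total`.
    """
    if duration_days <= 0 or not 0 <= cliff_days <= duration_days:
        raise ValueError("require duration_days > 0 and 0 <= cliff_days <= duration_days")
    elapsed = (on - start).days
    if elapsed < cliff_days:
        return 0.0
    return total * min(elapsed, duration_days) / duration_days


def unlocking_within(total: float, start: date, cliff_days: int, duration_days: int,
                     today: date, window_days: int) -> float:
    """Tokens that become unlocked between `today` and `today + window_days`."""
    later = today + timedelta(days=window_days)
    return (unlocked_amount(total, start, cliff_days, duration_days, later)
            - unlocked_amount(total, start, cliff_days, duration_days, today))
```

For a 1.2 million token grant starting on 1 January 2025 with a one-year cliff and four-year duration, the sketch flags 300,000 tokens unlocking in the 31 days after 1 December 2025. That is the kind of supply event investment and risk teams want on their radar in advance.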

Crypto Sentiment and Media Intelligence

Sentiment data from crypto-native media, social platforms, and community channels is the most volume-intensive category of cryptocurrency data scraping, and one of the most valuable for growth, product, and risk teams. News article publication frequency and sentiment polarity by token, Reddit post volume and vote ratios by project, Twitter/X mention count and engagement metrics, and Telegram group activity levels all contribute to a composite sentiment signal that has demonstrated predictive value for short-term price volatility and narrative cycle timing.

A critical nuance: raw mention volume is not the same as actionable sentiment signal. A spike in negative mentions that are predominantly coming from bot accounts is not the same signal as a spike in negative mentions from high-credibility accounts with demonstrated track records. Effective crypto market intelligence from sentiment data requires source quality weighting, not raw count aggregation.
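A minimal sketch of source quality weighting shows how a bot-driven spike can be neutralized. It assumes each mention already carries a polarity score from some sentiment model and that a credibility score per account is maintained separately; both are hypothetical inputs here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mention:
    author: str
    polarity: float  # -1.0 (negative) to +1.0 (positive), from any sentiment model


def weighted_sentiment(mentions: list[Mention], credibility: dict[str, float],
                       default_weight: float = 0.0) -> float:
    """Credibility-weighted mean polarity.

    Authors missing from `credibility` (e.g. suspected bots) get `default_weight`,
    zero by default, so unscored accounts do not move the signal.
    Returns 0.0 when no mention carries any weight.
    """
    weighted_sum = 0.0
    total_weight = 0.0
    for m in mentions:
        w = max(credibility.get(m.author, default_weight), 0.0)
        weighted_sum += w * m.polarity
        total_weight += w
    return weighted_sum / total_weight if total_weight > 0 else 0.0
```

Consider fifty negative mentions from unscored accounts and two positive mentions from credible ones. The weighted score stays positive, whereas a raw average would be strongly negative. Setting `default_weight` above zero is a judgment call about how much to trust unscored accounts.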

One-Off vs. Periodic: Two Completely Different Strategic Modes

One of the most consequential decisions in commissioning a cryptocurrency data scraping program is choosing between a single data acquisition exercise and an ongoing, continuously refreshed data feed. These are not variations on the same product. They serve different business decisions, require different technical architectures, and have different cost and time-to-value profiles.

For foundational context on continuous data delivery infrastructure, see DataFlirt’s breakdown of best real-time web scraping APIs for live data feeds.

When One-Off Cryptocurrency Data Scraping Is the Right Choice

One-off scraping serves business questions that have a defined answer requiring a point-in-time dataset. The analytical value of the data decays at a rate determined by the velocity of the market being studied, but for specific use cases, a well-documented, high-quality snapshot is exactly the right tool.

Token Due Diligence: Investment teams conducting due diligence on a token or DeFi protocol need a comprehensive point-in-time snapshot: current tokenomics, holder distribution, liquidity depth, protocol revenue metrics, team wallet behavior, and competitive positioning data. This is a classic one-off use case. The information is needed at a specific moment to support a specific investment decision.

Protocol Competitive Analysis: A DeFi product team evaluating a new feature or chain expansion needs a systematic landscape analysis: what competing protocols are deployed on the target chain, what TVL and user metrics are they posting, what fee and yield structures are they running, and where are the product gaps. This is a one-time research mandate with a defined answer.

Market Structure Research: A research team publishing a report on the state of DeFi lending or NFT market liquidity needs a comprehensive, well-documented dataset at a specific reference date. Reproducibility and data provenance documentation are the primary requirements, not continuous freshness.

Exchange Listing Intelligence: A token project evaluating which exchange to target for a listing needs a cross-exchange analysis of listing fees, trading volume by asset category, maker/taker fee structures, and token vetting criteria. This is a one-off strategic research exercise.

Investment Thesis Validation: A fund building a thesis around Layer 2 adoption, real-world asset tokenization, or decentralized physical infrastructure needs a comprehensive data snapshot to validate or refute the thesis before committing capital. One-off cryptocurrency data scraping at a defined date supports this exercise cleanly.

| Dimension | One-Off Requirement |
|---|---|
| Coverage | Maximum breadth across all relevant sources |
| Depth | Maximum field completeness per record |
| Accuracy | Cross-validated against secondary sources |
| Documentation | Full provenance: source URL, scrape timestamp, schema mapping |
| Delivery | Structured CSV, JSON, or database load within defined SLA |
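The documentation row is the one most often under-delivered. A minimal sketch of a per-record provenance envelope uses hypothetical field names. It attaches the source URL, the UTC scrape timestamp, the schema mapping version, and a SHA-256 hash of the raw payload to every delivered record, serialized as JSON Lines:

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProvenanceRecord:
    source_url: str
    scraped_at: str      # ISO-8601 UTC
    schema_version: str  # version of the source-to-target field mapping applied
    payload_sha256: str  # hash of the raw response, so the snapshot can be verified later


def make_provenance(source_url: str, raw_payload: bytes, schema_version: str,
                    scraped_at: datetime) -> ProvenanceRecord:
    return ProvenanceRecord(
        source_url=source_url,
        scraped_at=scraped_at.astimezone(timezone.utc).isoformat(),
        schema_version=schema_version,
        payload_sha256=hashlib.sha256(raw_payload).hexdigest(),
    )


def to_json_line(record: dict, provenance: ProvenanceRecord) -> str:
    """Serialize one delivered record with its provenance attached (one JSON Lines row)."""
    return json.dumps({**record, "_provenance": asdict(provenance)}, sort_keys=True)
```

Hashing the raw payload is a design choice rather than a requirement. It lets a reviewer confirm later that a delivered record was derived from the response that was actually archived.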

When Periodic Cryptocurrency Data Scraping Is Non-Negotiable

Periodic scraping is the correct architecture when your business decision is a function of how the market or protocol is moving, not where it stands at a single moment. If your use case requires trend data, velocity signals, or the ability to react to market changes in hours rather than weeks, periodic Web3 data extraction is not optional.

Real-Time Price and Volume Monitoring: Any trading operation, risk monitoring function, or portfolio management workflow that requires current market data is a periodic scraping use case. The minimum viable refresh cadence is determined by the decision latency your function can tolerate. For systematic trading, that may be seconds. For portfolio risk monitoring, it may be hourly. For strategic market intelligence, it may be daily. Overspecifying cadence adds cost without adding analytical value; underspecifying it creates decision risk.

DeFi Yield Rate Tracking: DeFi lending rates and liquidity pool APYs can change dramatically within hours as capital flows respond to protocol incentives. Any DeFi yield strategy that is not backed by continuously refreshed rate data is operating on stale information. Yield rate monitoring requires at minimum an hourly refresh cadence; in high-velocity incentive environments, 15-minute refreshes are justified.

NFT Floor Price Surveillance: NFT floor prices in active collections can move 20 to 30% within a trading session. Floor price surveillance for investment or risk management purposes requires a minimum daily refresh cadence; for active collections during market events, hourly or real-time monitoring is the correct architecture.

Sentiment and Narrative Monitoring: Crypto narrative cycles move fast. A token can go from obscure to trending in hours on the back of a viral post or a celebrity endorsement. Growth and risk teams that track narrative momentum require continuous sentiment scraping across key channels, typically refreshed every 4 to 8 hours for strategic purposes and hourly for risk-sensitive monitoring.

Compliance and Sanctions Screening: Regulatory obligations for continuous transaction monitoring and sanctions screening require continuously updated reference data. Exchange address databases, mixer service address lists, and regulatory sanctions publications must be refreshed at least daily, and in some regulatory frameworks, within hours of a new designation.

Automated Valuation and Pricing Model Maintenance: Machine learning models used for token valuation, DeFi position pricing, and NFT valuation degrade when the distribution of their input data drifts away from the training distribution. Keeping these models accurate requires a continuous stream of fresh training data, which makes this an indefinite periodic scraping commitment rather than a one-time exercise.
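
Drift of this kind can be measured before it shows up as model error. One common statistic is the Population Stability Index (PSI), which compares how a feature is distributed in fresh scraped data against the training sample. The sketch below is a plain-Python illustration of PSI, not a prescribed monitoring method; the bin count and the `eps` floor for empty bins are assumptions:

```python
import math
from bisect import bisect_right


def psi(expected, actual, n_bins=10, eps=1e-6):
    """Population Stability Index between a training sample and a fresh sample.

    Bin edges are quantiles of the expected (training) sample; eps replaces
    empty-bin proportions so the log term stays finite.
    """
    ordered = sorted(expected)
    # Interior cut points at the training-sample quantiles.
    edges = [ordered[int(len(ordered) * k / n_bins)] for k in range(1, n_bins)]

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            counts[bisect_right(edges, v)] += 1
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))
```

A frequently cited rule of thumb treats PSI above roughly 0.25 as significant drift worth a retrain, but that cut-off is a heuristic and should be calibrated per model and feature.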

| Use Case | Recommended Cadence | Rationale |
|---|---|---|
| Systematic trading signal | Sub-minute to 1-minute | Signal decay is near-instantaneous |
| Risk monitoring: exchange flows | Hourly | Leading indicators have short lead times |
| DeFi yield rate tracking | 15 minutes to hourly | Rates respond quickly to capital flows |
| NFT floor price surveillance | Hourly to daily | Active collections move within sessions |
| Sentiment and narrative monitoring | 4 to 8 hours | Narrative cycles build over hours |
| Portfolio benchmarking | Daily | Sufficient for strategic decision cadence |
| Compliance address screening | Daily | Regulatory refresh requirements |
| Token due diligence refresh | Weekly | Background monitoring of existing positions |
| Market structure research | Monthly | Strategic rhythm for structural analysis |
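
One practical way to enforce these cadences is a freshness check that compares each dataset’s last successful collection against the maximum age its use case tolerates. A minimal sketch; the use-case keys are hypothetical names, and using the slowest end of each cadence range as the tolerance is an assumption:

```python
from datetime import datetime, timedelta, timezone

# Maximum acceptable data age per use case, taken from the slowest end of
# each recommended cadence range (an assumption; tighten where needed).
MAX_AGE = {
    "systematic_trading_signal": timedelta(minutes=1),
    "risk_exchange_flows": timedelta(hours=1),
    "defi_yield_rates": timedelta(hours=1),
    "nft_floor_prices": timedelta(days=1),
    "sentiment_monitoring": timedelta(hours=8),
    "portfolio_benchmarking": timedelta(days=1),
    "compliance_address_screening": timedelta(days=1),
    "token_due_diligence": timedelta(weeks=1),
    "market_structure_research": timedelta(days=31),  # one month, rounded up
}


def is_stale(use_case, last_collected, now=None):
    """Return True if the latest collection is older than the use case tolerates."""
    if now is None:
        now = datetime.now(timezone.utc)
    return now - last_collected > MAX_AGE[use_case]
```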

Role-Based Data Utility in Depth

This is where strategic decisions get made. The same underlying cryptocurrency data scraping infrastructure, collecting price data, on-chain signals, DeFi metrics, and sentiment data from public sources, will generate entirely different analytical value depending on how data is processed and delivered for each team. Here is the detailed breakdown.

Quant Analysts: From Raw Trade Data to Signal Alpha

The data need in practice: A quant team building a mean reversion strategy across crypto perpetual markets needs sub-minute funding rate data across 20+ venues, normalized to a common denomination, with gaps explicitly flagged. They are not building dashboards. They are building vectorized signal libraries that process millions of rows of time series data. Data quality failures at the field level propagate directly into backtesting corruption and live signal degradation.

What cryptocurrency data scraping adds beyond official APIs: Official exchange APIs for historical data typically provide 90 to 180 days of granular trade history at most. Quant strategies require multiple years of data to achieve statistical significance across different market regimes: bull markets, bear markets, high-volatility regimes, low-liquidity environments, and post-liquidation cascade recovery periods. Cryptocurrency data scraping of aggregator historical data and exchange tick data archives provides the multi-year, multi-venue dataset that genuine backtesting requires.

Specific data products quant teams commission:

- Multi-year hourly OHLCV (open, high, low, close, volume) datasets across 500 to 5,000 tokens, normalized to USD

- Minute-level funding rate history across perpetual futures venues for basis and carry research

- Order book depth snapshots at 1-minute intervals for liquidity impact modeling

- Cross-exchange price spread matrices updated daily for pair selection in statistical arbitrage

- Token listing event databases with precise listing timestamps for event-driven research
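
The OHLCV datasets above are typically rebuilt from raw trade prints. A minimal plain-Python sketch of hourly bar aggregation, assuming trades arrive as `(timestamp_ms, price, size)` tuples with UTC epoch-millisecond timestamps:

```python
HOUR_MS = 3_600_000


def hourly_ohlcv(trades):
    """Aggregate (timestamp_ms, price, size) trades into hourly OHLCV bars.

    Bars are keyed by the UTC hour start in epoch milliseconds. Hours with no
    trades produce no bar here; gap flagging is a separate, explicit step.
    """
    bars = {}
    # Sort by timestamp so open/close reflect the first and last trade per hour.
    for ts, price, size in sorted(trades, key=lambda t: t[0]):
        bucket = ts - ts % HOUR_MS
        bar = bars.get(bucket)
        if bar is None:
            bars[bucket] = {"open": price, "high": price, "low": price,
                            "close": price, "volume": size}
        else:
            bar["high"] = max(bar["high"], price)
            bar["low"] = min(bar["low"], price)
            bar["close"] = price
            bar["volume"] += size
    return bars
```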

DataFlirt Insight: Quant teams that move from official API data to comprehensive scraped multi-venue datasets consistently report a 30 to 40% increase in the universe of testable strategies, because they are no longer limited to the historical coverage windows and venue selection of a single API provider.

Risk Teams: Building Early Warning Systems from Public Web Data

The data need in practice: A risk manager at a crypto fund needs to know when exchange inflows for a major token are accelerating before the price starts moving. They need that signal in hours, not days. No commercial data vendor provides exchange wallet flow data at the granularity and freshness that this use case requires. The data exists publicly on blockchain explorers and on-chain analytics portals. It requires Web3 data extraction, normalization, and delivery to a monitoring system.
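
Once explorer transfer records are extracted, exchange net flow reduces to classifying each transfer against a set of labelled exchange wallets. A minimal sketch, assuming EVM-style hex addresses (compared in lowercase), a hypothetical transfer record shape, and an exchange-wallet label set maintained elsewhere:

```python
from collections import defaultdict


def exchange_net_flow(transfers, exchange_wallets):
    """Net token flow into labelled exchange wallets.

    transfers: iterable of dicts with 'token', 'from', 'to', 'amount' keys.
    exchange_wallets: set of lowercase addresses labelled as exchange wallets.
    Positive values are net inflows to exchanges (commonly read as potential
    selling pressure). Exchange-to-exchange transfers are skipped as internal.
    """
    net = defaultdict(float)
    for t in transfers:
        src_is_exchange = t["from"].lower() in exchange_wallets
        dst_is_exchange = t["to"].lower() in exchange_wallets
        if dst_is_exchange and not src_is_exchange:
            net[t["token"]] += t["amount"]
        elif src_is_exchange and not dst_is_exchange:
            net[t["token"]] -= t["amount"]
    return dict(net)
```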

Key risk data products derived from cryptocurrency data scraping:

- Daily exchange net flow by token: net BTC, ETH, SOL, and stablecoin flows to and from major exchange hot wallets

- Funding rate extreme alerts: flags raised when funding rates exceed defined percentile thresholds across venues

- Leverage ratio proxies: open interest relative to spot trading volume as a market-wide leverage indicator

- Stablecoin supply delta: daily net change in USDC and USDT supply (new issuance minus redemptions) as a risk appetite proxy

- Whale movement alerts: large wallet transaction events above defined USD thresholds extracted from explorer data
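
Percentile-based funding alerts compare each venue’s latest rate with that venue’s own history rather than with a single fixed level. A minimal sketch; the 95th/5th percentile band and the input shapes are assumptions, and venue names in any real feed would come from the scraped data:

```python
def percentile(values, q):
    """Linearly interpolated percentile of values, with q in [0, 100]."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("percentile of empty sequence")
    pos = (len(ordered) - 1) * q / 100
    lower = int(pos)
    upper = min(lower + 1, len(ordered) - 1)
    return ordered[lower] + (ordered[upper] - ordered[lower]) * (pos - lower)


def funding_alerts(history, latest, q=95):
    """Flag venues whose latest funding rate sits outside the central band of
    their own history: above the q-th or below the (100 - q)-th percentile.

    history: {venue: [past funding rates]}; latest: {venue: current rate}.
    """
    alerts = {}
    for venue, rate in latest.items():
        past = history.get(venue)
        if not past:
            continue  # no baseline yet; surface separately rather than guess
        high, low = percentile(past, q), percentile(past, 100 - q)
        if rate > high:
            alerts[venue] = {"side": "high", "rate": rate, "threshold": high}
        elif rate < low:
            alerts[venue] = {"side": "low", "rate": rate, "threshold": low}
    return alerts
```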

Data delivery requirement for risk teams: Risk monitoring data must arrive on a defined schedule with automated quality checks. A delivery that arrives late or with missing fields is more dangerous than no delivery at all, because a risk team operating on incomplete data does not know what they are missing. DataFlirt’s delivery contracts for risk-sensitive use cases include SLA guarantees, automated quality check reports with each delivery, and escalation protocols for anomalous data quality events.

Compliance Teams: Blockchain Data Collection as Regulatory Infrastructure

The data need in practice: A compliance officer at a European crypto exchange operating under MiCA needs to screen every transaction against a current list of sanctioned wallet addresses, demonstrate to regulators that their screening data was current at the time of each transaction, and maintain an audit trail of their data provenance. This is not a commercial API use case. It is a bespoke blockchain data collection program with specific regulatory documentation requirements.

Compliance data products from cryptocurrency data scraping:

- Sanctions address database: daily-refreshed, deduplicated list of wallet addresses from OFAC SDN list, EU consolidated sanctions list, HM Treasury financial sanctions, and UN Security Council sanctions list

- Mixer and obfuscation service address sets: continuously updated from security research publications, blockchain analytics community sources, and regulatory advisories

- VASP (Virtual Asset Service Provider) wallet mapping: known exchange deposit and withdrawal addresses to support Travel Rule compliance

- Dark market and exploit address sets: wallet addresses associated with known hacks, rug pulls, and illicit marketplaces from security firm disclosures and law enforcement public announcements
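
Merging several sanctions and risk lists raises two practical issues: the same address can appear with different letter casing, and every match must remain traceable to the list that produced it. A minimal sketch, assuming records arrive as `(chain, address, source)` tuples; the `EVM_CHAINS` set is a hypothetical configuration, and the example addresses in the usage test are placeholders:

```python
# EVM hex addresses are case-insensitive; base58 addresses (e.g. Solana) are not.
EVM_CHAINS = {"ethereum", "arbitrum", "polygon", "bsc", "base"}


def normalize_address(chain, address):
    """Lowercase EVM addresses; keep case-sensitive formats exactly as published."""
    address = address.strip()
    return address.lower() if chain in EVM_CHAINS else address


def build_screening_set(records):
    """Merge (chain, address, source) records into one deduplicated index that
    keeps every source listing each address, for provenance."""
    index = {}
    for chain, address, source in records:
        key = (chain, normalize_address(chain, address))
        index.setdefault(key, set()).add(source)
    return index


def screen(index, chain, address):
    """Return the sorted sources that list this address, or [] if none do."""
    return sorted(index.get((chain, normalize_address(chain, address)), set()))
```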

Critical delivery requirements for compliance data: Every delivery must include explicit source attribution, collection timestamp, and version history. Compliance teams need to demonstrate to regulators not just that they screened transactions but that the reference data they screened against was current and complete. Data provenance documentation is as important as the data itself.

Product Managers: Competitive Protocol Intelligence

The data need in practice: A product manager at a DeFi yield aggregator needs to know whether a competing protocol just launched a new incentivized liquidity pool that could pull users away from their platform. They need that information within hours, not weeks. Competitive monitoring in DeFi requires continuous Web3 data extraction of competing protocol dashboards, governance announcements, and on-chain event data.

Competitive intelligence products from cryptocurrency data scraping:

- Protocol TVL ranking by chain, refreshed daily, with week-over-week delta flagging

- DeFi yield rate matrix: current APY and APR across competing lending protocols by token, refreshed hourly

- Governance activity monitoring: new proposal submissions across major DAO platforms, with proposal text extraction and outcome tracking

- Exchange listing timeline tracking: new token listings across 50 to 200 exchanges with listing date, initial volume, and price impact data

- Feature availability matrix: which chains, tokens, and product features are live on competing platforms versus in development

- Token incentive program monitoring: active liquidity mining programs on competing protocols with emission rates, eligible pools, and program end dates
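
Week-over-week delta flagging for a TVL ranking comes down to comparing two snapshots. A minimal sketch, assuming each snapshot is a `{protocol: tvl_usd}` mapping; protocols with no prior value get `None` rather than a misleading zero:

```python
def tvl_deltas(current, previous):
    """Week-over-week TVL change and rank shift per protocol.

    Rank 1 is the largest TVL. A positive rank_shift means the protocol moved up.
    """
    def ranks(snapshot):
        ordered = sorted(snapshot, key=lambda p: snapshot[p], reverse=True)
        return {p: i + 1 for i, p in enumerate(ordered)}

    cur_rank, prev_rank = ranks(current), ranks(previous)
    rows = {}
    for protocol, tvl in current.items():
        prev = previous.get(protocol)
        rows[protocol] = {
            "tvl": tvl,
            "rank": cur_rank[protocol],
            # None (not 0) when there is no usable prior value to compare against.
            "wow_pct": (tvl - prev) / prev * 100 if prev else None,
            "rank_shift": (prev_rank[protocol] - cur_rank[protocol]
                           if protocol in prev_rank else None),
        }
    return rows
```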

What this data unlocks: Product teams that implement systematic competitive intelligence through cryptocurrency data scraping report the ability to reduce feature gap discovery time from weeks (manual monitoring) to hours (automated extraction), enabling faster response to competitive moves that directly affect user retention.

Growth Teams: Community Intelligence and Narrative Timing

The data need in practice: A growth team at a Web3 gaming company needs to know which narrative is building in the GameFi community before launching their next campaign. They need to understand which influencer wallets are positioning in their token category, which Discord servers are growing fastest in their competitive set, and which keywords are gaining search volume in crypto media. This is a crypto market intelligence use case that requires continuous sentiment and community data extraction.

Growth intelligence products from cryptocurrency data scraping:

- Keyword volume trends across crypto media publications: article count by keyword category updated weekly

- Reddit community growth tracking: subscriber count and daily active user proxies for project-specific subreddits and major crypto forums

- Influencer wallet behavior monitoring: token purchase and sale activity for known high-credibility crypto community figures

- Token narrative clustering: unsupervised grouping of tokens by shared discussion themes in community channels, updated weekly

- Airdrop and incentive program database: active token distribution programs across the Web3 ecosystem, with eligibility criteria, participation requirements, and reward structures
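
The keyword volume trend in the first item can start as a weekly count of articles mentioning each tracked term. A minimal sketch using ISO weeks and case-insensitive substring matching; the matching rule is a deliberate simplification (real pipelines typically tokenize text and handle synonyms):

```python
from collections import Counter
from datetime import date


def weekly_keyword_counts(articles, keywords):
    """Count articles mentioning each keyword per ISO week.

    articles: iterable of (published: date, text: str).
    Returns a Counter keyed by (iso_year, iso_week, keyword).
    """
    counts = Counter()
    lowered = [k.lower() for k in keywords]
    for published, text in articles:
        year, week, _ = published.isocalendar()
        body = text.lower()
        for k in lowered:
            if k in body:
                counts[(year, week, k)] += 1
    return counts
```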

The Data Quality Architecture for Scraped Crypto Data

Raw scraped data from crypto sources is not analytically usable without a structured quality processing pipeline. The complexity and scale of the crypto data landscape, with thousands of tokens, hundreds of venues, multiple chains, and inconsistent field standards across every source, make data quality design the most critical decision in a cryptocurrency data scraping program.

For context on data quality frameworks, see DataFlirt’s comprehensive guide to assessing data quality for scraped datasets.

Layer 1: Token Identity Resolution and Deduplication

A token listed as WBTC on one source, Wrapped Bitcoin on a second, and 0x2260FAC5E5542a773Aa44fBCfeDf7C193bc2C599 (its Ethereum contract address) on a third represents the same asset. Without canonical token identity resolution, a dataset covering 15,000 tokens from 500 sources will contain 50,000 to 100,000 rows for the same underlying universe. Deduplication in crypto data requires matching across ticker symbol, contract address, chain identifier, and project name simultaneously, with explicit conflict resolution rules for the substantial number of cases where multiple tokens share the same ticker.

Identity resolution requirements:

- Contract address as the canonical token identifier on each chain (not ticker symbol, which is not unique)

- Cross-chain bridge token mapping: USDC on Ethereum, USDC on Arbitrum, and USDC on Polygon are different contract addresses representing the same underlying asset

- Wrapped token resolution: linking WBTC to native BTC and monitoring their price parity, rather than treating them as unrelated assets

- Delisted token handling: explicit records for tokens that have been delisted rather than silent row deletion
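
These requirements can be sketched as a small registry keyed by `(chain, contract address)`, mapping each key to an underlying asset ID and surfacing tickers that collide across different assets. This is an illustration under stated assumptions: the `EVM_CHAINS` set and asset IDs are hypothetical, and the non-WBTC addresses in the usage test are placeholders:

```python
EVM_CHAINS = {"ethereum", "arbitrum", "polygon", "bsc", "base"}


def canonical_key(chain, contract_address):
    """(chain, address) is the per-chain token identity; tickers are not unique."""
    chain = chain.lower()
    addr = contract_address.strip()
    # EVM hex addresses are case-insensitive, so fold case only on EVM chains.
    return (chain, addr.lower() if chain in EVM_CHAINS else addr)


class TokenRegistry:
    def __init__(self):
        self.assets = {}     # canonical key -> underlying asset id
        self.by_ticker = {}  # ticker -> set of canonical keys

    def register(self, chain, contract_address, ticker, asset_id):
        key = canonical_key(chain, contract_address)
        self.assets[key] = asset_id
        self.by_ticker.setdefault(ticker.upper(), set()).add(key)

    def resolve(self, chain, contract_address):
        return self.assets.get(canonical_key(chain, contract_address))

    def ambiguous_tickers(self):
        """Tickers shared by different underlying assets need explicit conflict rules."""
        return {t for t, keys in self.by_ticker.items()
                if len({self.assets[k] for k in keys}) > 1}
```

Bridged deployments (USDC on several chains) share one asset ID and so are not flagged, while two unrelated tokens reusing a ticker are.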

Layer 2: Price and Volume Normalization

Exchange-reported prices and volumes are not directly comparable without normalization. An exchange that reports volume in BTC terms is not comparable to one that reports in USDT terms without FX conversion at a consistent reference rate. An exchange that counts both sides of a trade as volume doubles the reported figure compared to one that counts only one side. Wash trading, which is endemic in certain market segments, inflates reported volumes without corresponding economic activity.
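
The first two adjustments can be expressed as a single conversion step: price the reported volume in USD with one documented reference rate, then halve figures from venues known to count both sides of each trade. A minimal sketch; the rates in the usage test are illustrative, and which venues count both sides is an input the program must maintain:

```python
def normalize_volume_usd(reported_volume, quote_asset, usd_rates, counts_both_sides):
    """Convert a venue's reported volume to single-sided USD volume.

    usd_rates: USD reference price per quote asset, taken from one documented
    source at one snapshot time so every venue is converted consistently.
    counts_both_sides: True for venues that report buy and sell sides
    separately; their figures are halved to match single-sided venues.
    """
    if quote_asset not in usd_rates:
        raise KeyError(f"no reference rate for {quote_asset}")
    usd = reported_volume * usd_rates[quote_asset]
    return usd / 2 if counts_both_sides else usd
```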

Normalization requirements for market data:

- USD denomination conversion using a consistent reference FX rate with explicit conversion source documentation

- Volume double-counting identification and adjustment across venues with known double-counting practices

- Wash trading indicator flagging based on order book depth to volume ratios and buyer/seller address reuse patterns

- Price source hierarchy: for tokens traded on multiple venues, a defined source priority for canonical price

- Gap flagging: explicit null records for time periods where data collection failed, rather than forward-filling or silent interpolation
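
Gap flagging means reindexing each series onto the grid of timestamps it should have, then emitting explicit null rows for the missing slots. A minimal sketch, assuming observations are already aligned to the collection interval as `(timestamp_ms, value)` pairs:

```python
def flag_gaps(observations, start_ms, end_ms, interval_ms):
    """Reindex observations onto the expected grid [start_ms, end_ms).

    Missing slots become explicit records with value None and is_gap=True;
    nothing is forward-filled or interpolated.
    """
    by_ts = dict(observations)
    return [
        {"ts": ts, "value": by_ts.get(ts), "is_gap": ts not in by_ts}
        for ts in range(start_ms, end_ms, interval_ms)
    ]
```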

Layer 3: Timestamp Standardization

Timestamp inconsistency is one of the most underappreciated data quality problems in cryptocurrency data scraping, and one of the most analytically destructive. Different exchanges report trade timestamps in different time zones, at different precision levels (seconds versus milliseconds), with different epoch reference points, and in some cases with intentional timestamp masking for latency advantage.

Timestamp standardization requirements:

- All timestamps normalized to UTC with millisecond precision

- Exchange-specific timestamp offset documentation for venues with known reporting delays

- Block timestamp versus event timestamp distinction for on-chain data (block time is not the same as the moment a transaction was submitted)

- Event ordering within a block for on-chain data where multiple transactions share the same block timestamp
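
The first requirement is easiest to enforce at ingestion, with one function that every source passes through. A minimal sketch: numeric epochs are disambiguated by magnitude (a heuristic that holds for dates between roughly 2001 and 2286), and ISO 8601 strings must carry an explicit offset so that local-time strings are rejected rather than silently assumed to be UTC:

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def to_utc_ms(raw):
    """Normalize a source timestamp to UTC epoch milliseconds."""
    if isinstance(raw, (int, float)):
        magnitude = abs(raw)
        if magnitude < 1e11:          # epoch seconds (~1e9 to 1e10)
            return int(round(raw * 1000))
        if magnitude < 1e14:          # epoch milliseconds (~1e12 to 1e13)
            return int(raw)
        if magnitude < 1e17:          # epoch microseconds (~1e15 to 1e16)
            return int(raw // 1_000)
        return int(raw // 1_000_000)  # epoch nanoseconds
    # Older Python versions do not accept a trailing "Z", so map it to +00:00.
    dt = datetime.fromisoformat(raw.replace("Z", "+00:00"))
    if dt.tzinfo is None:
        raise ValueError(f"timestamp has no UTC offset: {raw!r}")
    # Integer timedelta division avoids float rounding at millisecond precision.
    return (dt - EPOCH) // timedelta(milliseconds=1)
```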

Layer 4: Schema Standardization and Field Documentation

A comprehensive cryptocurrency data scraping program that sources data from 50 exchanges, 20 DeFi analytics portals, 10 blockchain explorers, and 15 social and media sources will encounter 95+ different data schemas for overlapping concepts. Schema standardization translates all source-specific formats into a single canonical output schema with explicit field-level documentation, null handling specifications, and version control.
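
In practice, schema standardization is a per-source field map applied to every record, plus explicit nulls and version tags on the output. A minimal sketch; the source names, field names, and version string are hypothetical. Recording unmapped fields gives an early signal that a source has changed its format:

```python
CANONICAL_SCHEMA_VERSION = "1.0.0"
CANONICAL_FIELDS = ("symbol", "price", "volume", "ts")

# Per-source maps: source field name -> canonical field name (hypothetical).
FIELD_MAPS = {
    "exchange_a": {"s": "symbol", "p": "price", "v": "volume", "T": "ts"},
    "aggregator_b": {"ticker": "symbol", "last_price": "price",
                     "vol_24h": "volume", "updated_at": "ts"},
}


def to_canonical(source, record):
    """Translate one source record into the canonical schema."""
    mapping = FIELD_MAPS[source]
    mapped = {canon: record.get(raw) for raw, canon in mapping.items()}
    # Every canonical field is always present; missing values are explicit None.
    row = {field: mapped.get(field) for field in CANONICAL_FIELDS}
    row["_source"] = source
    row["_schema_version"] = CANONICAL_SCHEMA_VERSION
    row["_unmapped_fields"] = sorted(set(record) - set(mapping))
    return row
```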

DataFlirt’s recommended field completeness thresholds by use case:

| Use Case | Critical Field Completeness | Enrichment Field Completeness |
|---|---|---|
| Quant model training | 99%+ | 90%+ |
| Risk monitoring | 97%+ | 80%+ |
| Compliance screening | 99%+ | 70%+ |
| Product benchmarking | 92%+ | 65%+ |
| Growth intelligence | 88%+ | 55%+ |
| Market research | 85%+ | 45%+ |
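
These thresholds can be turned into an automated acceptance check on each delivery. A minimal sketch; the use-case keys are hypothetical names, the floors come from the table, and treating `None` or an empty string as missing is an assumption:

```python
CRITICAL_FLOOR = {
    "quant_model_training": 0.99, "risk_monitoring": 0.97,
    "compliance_screening": 0.99, "product_benchmarking": 0.92,
    "growth_intelligence": 0.88, "market_research": 0.85,
}
ENRICHMENT_FLOOR = {
    "quant_model_training": 0.90, "risk_monitoring": 0.80,
    "compliance_screening": 0.70, "product_benchmarking": 0.65,
    "growth_intelligence": 0.55, "market_research": 0.45,
}


def completeness(rows, field):
    """Share of rows where the field is present and non-empty."""
    if not rows:
        return 0.0
    return sum(1 for r in rows if r.get(field) not in (None, "")) / len(rows)


def check_delivery(rows, use_case, critical_fields, enrichment_fields):
    """Return {field: (rate, floor)} for every field below its floor; {} means pass."""
    failures = {}
    for fields, floors in ((critical_fields, CRITICAL_FLOOR),
                           (enrichment_fields, ENRICHMENT_FLOOR)):
        floor = floors[use_case]
        for field in fields:
            rate = completeness(rows, field)
            if rate < floor:
                failures[field] = (rate, floor)
    return failures
```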

Crypto and Web3 Portals to Scrape by Region and Data Category

The following table maps the highest-value publicly accessible cryptocurrency data sources for systematic Web3 data extraction programs in 2026. Coverage is organized by region and data category to reflect the geographic and regulatory variations that affect data availability and collection complexity.

| Region / Category | Target Websites | Why Scrape? |
|---|---|---|
| Global: Price Aggregators | CoinGecko, CoinMarketCap, CoinPaprika, LiveCoinWatch | Multi-exchange normalized price, volume, market cap, circulating supply, and historical OHLCV for 15,000+ tokens. Foundation layer for any crypto market intelligence program. Aggregators normalize across exchanges, reducing source-level normalization overhead. |
| Global: DEX and DeFi Analytics | DeFiLlama, DeBank, Token Terminal, Dune Analytics (public dashboards), Messari Protocol Profiles | TVL by protocol and chain, protocol revenue, fee generation, user counts, yield rates, governance token metrics, and DeFi ecosystem benchmarking data. Essential for DeFi product benchmarking and investment analysis. |
| Global: On-Chain Blockchain Explorers | Etherscan, BscScan, Solscan, Polygonscan, Arbiscan, Snowtrace (Avalanche), Basescan, Blockchair (multi-chain) | Transaction history, wallet balance snapshots, token transfer events, smart contract interactions, gas fee data, and entity attribution for compliance and risk use cases. Foundation for blockchain data collection programs. |
| Global: NFT Market Data | OpenSea (public listings), Blur (public data), Magic Eden, Rarible, LooksRare, NFTScan, CryptoSlam | Floor prices by collection, sales volume, trait rarity distributions, wash trading signals, holder concentration data, and listing depth analysis. Essential for NFT investment intelligence and market risk monitoring. |
| Global: Derivatives and Futures Data | Coinglass (formerly Bybt), CryptoQuant (public metrics), Glassnode (free tier public dashboards) | Open interest, funding rates, long/short ratios, liquidation data, options market structure, and exchange net flow data. Critical for risk monitoring and quant signal research. |
| Global: Crypto News and Media | CoinDesk, CoinTelegraph, The Block, Decrypt, Blockworks, BeInCrypto, Crypto Briefing, CryptoSlate | Article publication frequency by token and topic, editorial sentiment signals, market-moving announcement tracking, and narrative cycle timing for growth and risk teams. |
| Global: Social and Community Intelligence | Reddit (public subreddit data via Pushshift mirrors and the official API), BitcoinTalk.org forums (public boards), Crypto Twitter (X) search endpoints | Community mention volume, sentiment trends, influencer activity monitoring, emerging narrative detection, and community growth velocity for growth teams and risk sentiment monitoring. |
| Global: Governance and DAO Data | Snapshot (public governance portal), Tally, Boardroom, Commonwealth | Governance proposal text and voting outcomes, DAO participation rates, token holder voting behavior, and protocol governance health indicators for product and compliance teams. |
| United States | SEC EDGAR (crypto-related filings), CFTC public enforcement and registration data, FinCEN public reports, OFAC SDN list updates | Regulatory filing monitoring for listed crypto-linked companies, enforcement action tracking, sanctioned entity and wallet address updates, and compliance reference data for US-regulated entities. |
| European Union | ESMA public consultations and MiCA implementation guidance, EBA crypto-asset registers (as they develop), national competent authority (NCA) public registers | MiCA compliance reference data, VASP registration status tracking across EU member states, regulatory guidance monitoring for compliance teams operating in Europe. |
| United Kingdom | FCA Financial Register (public), FCA crypto asset firm registration data | UK VASP registration status, FCA enforcement action monitoring, and compliance reference data for UK-regulated crypto businesses. |
| Global: Exchange Fee and Feature Intelligence | Public fee schedule pages of 200+ centralized exchanges, DEX protocol documentation pages, exchange listing announcement pages | Trading fee benchmarking, withdrawal fee comparison, maker/taker fee structures, listing requirement criteria, and feature availability matrices for product and growth teams. |
| Global: Token Project Intelligence | GitHub public repositories (commit frequency, contributor counts), CoinList and Polkastarter public IDO data, token unlock schedule aggregators, project whitepaper and documentation archives | Development activity metrics, token vesting schedule data, IDO and presale history, team credential signals, and tokenomics structure data for investment due diligence and risk analysis. |
| Asia-Pacific: Japan, South Korea, Singapore | FSA Japan public registration data, VASP registration portals, Bithumb and Upbit public market data (for KRW-denominated premium analysis), MAS Singapore public crypto license register | Asian regulatory compliance reference data, KRW premium analysis for cross-market arbitrage research, APAC exchange-specific market data for regional coverage programs. |

Delivery Formats That Actually Match Downstream Workflows

Data that arrives in the wrong format for the consuming team is data that does not get used, regardless of its technical quality. A critical and consistently underappreciated aspect of cryptocurrency data scraping program design is specifying delivery format and integration architecture before collection begins, not after.

For broader context on data delivery infrastructure, see DataFlirt’s overview of best databases for storing scraped data at scale and best cloud storage solutions for managing large scraped datasets.

For quant and data science teams: Parquet files partitioned by date and token, delivered to an S3 or GCS bucket with a defined directory convention. Parquet’s columnar format provides 5 to 10x storage compression over CSV for high-cardinality time series data and dramatically reduces query scan time for analytical workloads. Alternatively, direct incremental loads to BigQuery, Snowflake, or Redshift with defined partition schemes aligned to the team’s existing query patterns.
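
A "defined directory convention" is often a Hive-style layout (`key=value` path segments), which query engines such as Spark or Athena can use to skip irrelevant partitions. A minimal sketch of such a key builder; the bucket and dataset names are hypothetical, and the token segment uses a canonical asset ID rather than a ticker because tickers are not unique:

```python
from datetime import date


def partition_path(bucket, dataset, day, asset_id, part=0):
    """Build a Hive-style object key partitioned by date and canonical token ID."""
    return (f"s3://{bucket}/{dataset}/date={day.isoformat()}"
            f"/token={asset_id}/part-{part:05d}.parquet")
```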

For risk monitoring teams: Structured JSON with explicit schema versioning, delivered to a Kafka topic or direct database insert for low-latency risk dashboard consumption. Alert-worthy signals (funding rate extremes, exchange inflow spikes, large whale movements) delivered separately as enriched event records with flag fields and contextual metadata, not embedded in the raw data stream.
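
An enriched alert record of this kind is a small, versioned JSON document carrying the value, the threshold that was breached, and its context. A minimal sketch; the field names and `ALERT_SCHEMA_VERSION` value are hypothetical. The resulting string would then be published to a dedicated alerts topic, separate from the raw stream, with whichever Kafka client the team already uses:

```python
import json
from datetime import datetime, timezone

ALERT_SCHEMA_VERSION = "2.1"  # hypothetical version string


def make_alert(alert_type, token, value, threshold, context):
    """Build an enriched alert record, serialized as JSON."""
    record = {
        "schema_version": ALERT_SCHEMA_VERSION,
        "alert_type": alert_type,  # e.g. "funding_rate_extreme"
        "token": token,
        "value": value,
        "threshold": threshold,
        # How far past the threshold the value sits, as a percentage.
        "breach_pct": (round((value - threshold) / abs(threshold) * 100, 2)
                       if threshold else None),
        "emitted_at": datetime.now(timezone.utc).isoformat(timespec="milliseconds"),
        "context": context,
    }
    return json.dumps(record, sort_keys=True)
```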

For compliance teams: Structured CSV with explicit field documentation, delivered with a signed data provenance record that includes collection timestamp, source URLs, and schema version. Compliance data requires an unbroken audit chain from source to delivery. Delivery must include a human-readable data dictionary alongside the data file itself.
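
A signed provenance record can be as simple as a manifest that holds the collection timestamp, source URLs, schema version, and a SHA-256 hash of the delivered file, authenticated with an HMAC. A minimal sketch using only the standard library; key management is out of scope here, and the URL and key in the usage test are placeholders:

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone


def provenance_manifest(data_bytes, source_urls, schema_version, signing_key,
                        collected_at=None):
    """Build a provenance manifest for one delivery file and sign it."""
    manifest = {
        "collected_at": collected_at
        or datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "source_urls": sorted(source_urls),
        "schema_version": schema_version,
        "sha256": hashlib.sha256(data_bytes).hexdigest(),
    }
    payload = json.dumps(manifest, sort_keys=True).encode()
    manifest["hmac_sha256"] = hmac.new(signing_key, payload, hashlib.sha256).hexdigest()
    return manifest


def verify_manifest(manifest, data_bytes, signing_key):
    """Check both the manifest signature and the file hash it records."""
    body = {k: v for k, v in manifest.items() if k != "hmac_sha256"}
    payload = json.dumps(body, sort_keys=True).encode()
    expected = hmac.new(signing_key, payload, hashlib.sha256).hexdigest()
    return (hmac.compare_digest(expected, manifest["hmac_sha256"])
            and body["sha256"] == hashlib.sha256(data_bytes).hexdigest())
```

HMAC only proves integrity to parties who share the key. If regulators or auditors must verify deliveries independently, an asymmetric signature scheme is the more appropriate choice.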

For product and growth teams: Enriched flat files with pre-computed delta fields (week-over-week change, rank shift, percentage deviation from benchmark), delivered to Google Sheets via automated load or to a BI tool such as Looker or Tableau via a scheduled database connection. The product team’s primary analytical tool should be the target of delivery format design.

For executive and strategy teams: Pre-aggregated summary datasets with defined KPI fields, delivered as scheduled reports or dashboard data inputs. Raw data delivery to strategy teams creates consumption friction that consistently results in the data not being used. Aggregation to the level of the strategic question is the correct design choice.

Legal and Ethical Guardrails for Cryptocurrency Data Scraping

Crypto web data collection operates under the same legal frameworks as any other form of web scraping, with several additional considerations specific to the nature of the data being collected.

For comprehensive context on the legal landscape, see DataFlirt’s analysis of data crawling ethics and best practices and its guide “Is Web Crawling Legal?”

Terms of Service Compliance: Most major crypto exchanges, aggregators, and analytics portals include ToS provisions restricting automated data collection. Enforceability varies by jurisdiction and by the nature of the restriction, but ToS violations create civil litigation risk even where the underlying data is publicly accessible. Any cryptocurrency data scraping program must include a ToS review for each target source before collection begins. Sources that explicitly prohibit data extraction in their ToS should be flagged for legal assessment before proceeding.

Personal Data in Crypto Contexts: On-chain wallet data is a nuanced personal data question that is evolving across jurisdictions. A wallet address is pseudonymous, not anonymous: it can be linked to an individual through exchange KYC data, IP address records, and off-chain data correlation. In jurisdictions where GDPR or equivalent data protection law applies, any blockchain data collection program that includes wallet-level data should receive a data protection impact assessment before collection commences, particularly for compliance programs where the explicit purpose includes re-identification of individuals.

Compliance Data Special Considerations: Blockchain data collection for compliance and AML purposes is subject to specific regulatory frameworks in each jurisdiction. The data collected, how it is stored, who has access to it, and how long it is retained must all be defined in a documented data governance policy aligned to applicable AML and data protection regulations before any collection begins.

robots.txt and Ethical Collection Practices: Ethical cryptocurrency data scraping programs respect robots.txt directives on target platforms, implement rate limiting that avoids degrading platform performance for legitimate users, and avoid collection techniques that circumvent technical access controls through unauthorized means.
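
Both practices can be enforced in the crawler itself. A minimal sketch using Python’s standard `urllib.robotparser` to check an already-fetched robots.txt body, plus a simple per-host minimum-interval limiter; the interval value and user-agent string are assumptions each program should set per source:

```python
import time
import urllib.robotparser


def robots_allows(robots_txt, user_agent, url):
    """Check a URL against an already-fetched robots.txt body."""
    parser = urllib.robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


class MinIntervalLimiter:
    """Enforce a minimum gap between consecutive requests to one host."""

    def __init__(self, min_interval_s, clock=time.monotonic, sleep=time.sleep):
        self.min_interval_s = min_interval_s
        self.clock, self.sleep = clock, sleep
        self.last = None

    def wait(self):
        now = self.clock()
        if self.last is not None:
            remaining = self.min_interval_s - (now - self.last)
            if remaining > 0:
                self.sleep(remaining)
                now += remaining
        self.last = now
```

`RobotFileParser` also provides `crawl_delay()`, which could seed `min_interval_s` for sites that declare a crawl delay.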

Market Manipulation Considerations: Any cryptocurrency data scraping program that feeds directly into trading algorithms must be reviewed for potential market manipulation implications. Using scraped sentiment data to systematically amplify or suppress market signals in ways that distort price discovery may attract regulatory scrutiny in jurisdictions with developing crypto market manipulation frameworks.

Building Your Cryptocurrency Data Strategy: A Practical Framework

Before commissioning any cryptocurrency data scraping program, business teams should work through the following decision framework. It takes approximately 90 minutes of structured internal discussion and prevents the most common and expensive mistakes in crypto data acquisition.

Define the Business Decision

Start with the specific decision this data needs to power, not with the data you think you want. “We want crypto data” is not a decision definition. “We need to monitor exchange net flow data for our top 20 portfolio positions, updated every 4 hours, and deliver it as a risk alert to the portfolio management team when flow exceeds a defined threshold” is a decision definition. The specificity of the decision drives every subsequent architectural choice.

Map Required Data Types to the Decision

What specific data fields, at what geographic and protocol granularity, with what freshness requirement, does that decision actually require? This exercise frequently reveals that teams are requesting far more data than their decision requires, or that specific signals they need are not available from the obvious sources and require supplementary data acquisition.

Assess the Cadence Requirement

Is this a one-off or periodic need? If periodic, what is the minimum refresh cadence that keeps data analytically current for the target decision? Real-time scraping is significantly more expensive and technically complex than daily batch scraping. Specifying cadence with precision, rather than defaulting to “as fast as possible,” is one of the most consequential cost and complexity decisions in a cryptocurrency data scraping program design.

Define Data Quality Requirements Explicitly

What are the minimum acceptable field completeness rates for critical fields? What timestamp precision is required? What deduplication standard applies? What price normalization methodology is needed? Defining these thresholds before collection begins prevents the expensive discovery, halfway through a program, that the data quality delivered does not meet the analytical requirements.

Specify Delivery Format and Integration Target

How does this data need to arrive for the consuming team to use it without additional transformation? An analytically perfect dataset delivered in the wrong format to the wrong system is a dataset that will not be used. Delivery format specification is a function of the consuming workflow, not the data itself.

Assess Legal and Ethical Boundaries

Which specific sources are in scope? Do any require authentication for the target data? Does the data include personal or pseudonymous data at the wallet level? What is the applicable jurisdictional legal framework for the use case? These questions must be answered in consultation with legal counsel before any technical collection work begins.

DataFlirt’s Consultative Approach to Crypto and Web3 Data Delivery

DataFlirt approaches cryptocurrency data scraping engagements from the business outcome backward, not from the technical architecture forward. The starting question in every engagement is not “what sources can we crawl?” but “what decision does this data need to power, who is making that decision, and how frequently do they need updated data to make it well?”

For a one-off token due diligence project, this means defining precise coverage requirements, field completeness standards, and quality documentation needs up front, then delivering a single, well-documented, schema-consistent dataset with full data provenance rather than a raw extraction that requires weeks of internal processing.

For a continuous crypto market intelligence program serving a trading desk, this means designing a delivery architecture that integrates directly with the team’s existing data infrastructure, with defined refresh cadences, schema versioning policies that prevent breaking changes to downstream models, and automated quality monitoring at each delivery cycle.

For a compliance data program serving a regulated crypto exchange, this means building a delivery workflow that includes explicit source attribution, collection timestamps, version history, and audit-ready documentation for every data delivery, aligned to the regulatory documentation standards the compliance team must satisfy.

The technical infrastructure behind DataFlirt’s Web3 data extraction capability, including residential proxy infrastructure for geography-specific collection, JavaScript rendering capacity for dynamic analytics portals, rate limit management across high-sensitivity sources, and distributed crawl orchestration at scale, is the enabler of these outcomes. But it is not the point. The point is the data: normalized, complete, timely, and delivered in a format that eliminates friction between collection and decision.

Explore DataFlirt’s cryptocurrency-specific services at the cryptocurrency web scraping services page and learn more about turnkey data delivery options at the managed scraping services page.

For organizations evaluating an in-house cryptocurrency data scraping program against a managed delivery solution, DataFlirt’s detailed comparison on outsourced vs. in-house web scraping services covers the key decision dimensions.

Additional Reading from DataFlirt

For teams building out a broader data acquisition strategy alongside cryptocurrency data scraping, the following DataFlirt resources provide relevant context:

- Web Scraping for Stock Market Data: Signals and Strategy

- Web Data for Finance: Acquisition and Analytical Frameworks

- Sentiment Analysis for Business Growth: From Raw Text to Signal

- Datasets for Competitive Intelligence: A Buyer’s Guide

- Best Scraping Platforms for Building AI Training Datasets

- Alternative Data for Ecommerce and Investment Research

- Crypto Data Mining: On-Chain Intelligence for Trading Teams

- Web Scraping for Cryptocurrency Trading: Tactical and Strategic Applications

- Key Considerations When Outsourcing Your Web Scraping Project

- DataFlirt Cryptocurrency Web Scraping Services

Frequently Asked Questions

What is cryptocurrency data scraping and how is it different from using official exchange APIs?

Cryptocurrency data scraping is the automated, programmatic collection of publicly available market data, on-chain activity records, exchange order book snapshots, DeFi protocol metrics, social sentiment signals, and NFT floor prices from crypto exchanges, aggregator platforms, blockchain explorers, and Web3 analytics portals at scale. It is distinct from official API access because it captures breadth, historical depth, and cross-source coverage across thousands of sources that have no official API, or where official APIs are rate-limited, paywalled, or structurally incomplete for advanced analytical use cases. For business teams, it is the difference between a narrow, single-venue data window and a comprehensive market intelligence layer.

How do different teams inside a crypto company or financial institution use scraped crypto data?

Quant analysts use scraped order book and trade history data for signal generation and backtesting. Risk teams use scraped exchange inflow and outflow data for early warning on market stress events. Compliance officers use blockchain data collection to map wallet clusters and monitor for sanctioned address exposure. Product managers at Web3 platforms use crypto market intelligence to benchmark competitor protocol metrics and token incentive structures. Data leads use scraped DeFi and NFT data to train pricing models and market anomaly detectors. Growth teams use sentiment and community data to time narratives and identify emerging audience segments. Each role consumes the same underlying data sources through an entirely different analytical framework.

When should a crypto business invest in one-off scraping versus a continuous data feed?

One-off cryptocurrency data scraping is appropriate for investment thesis validation, token due diligence, protocol competitive landscape analysis, and point-in-time market structure research. A continuous feed, built on periodic scraping that runs on cadences from minutes to daily depending on the use case, is required for price monitoring, DeFi yield rate tracking, NFT floor price surveillance, compliance screening data maintenance, sentiment trend monitoring, and any use case where data freshness directly drives a trading, product, or compliance decision.
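To make the one-off versus continuous distinction concrete, here is a minimal sketch of how a continuous feed might decide when each dataset is due for another collection run. The use-case names and intervals in `REFRESH_INTERVALS` are hypothetical, chosen only to span the minutes-to-daily range described above, and `is_refresh_due` is an illustrative helper rather than part of any DataFlirt or scheduler API.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, Optional

# Hypothetical cadences for illustration only: they span the minutes-to-daily
# range, but real values depend on how fresh each consuming team needs the data.
REFRESH_INTERVALS: Dict[str, timedelta] = {
    "price_monitoring": timedelta(minutes=1),
    "nft_floor_surveillance": timedelta(minutes=15),
    "defi_yield_tracking": timedelta(hours=1),
    "sentiment_trends": timedelta(hours=6),
    "compliance_screening": timedelta(days=1),
}


def is_refresh_due(use_case: str, last_run: Optional[datetime], now: datetime) -> bool:
    """Return True when the periodic job for `use_case` should run again.

    A `last_run` of None means the dataset has never been collected.
    """
    interval = REFRESH_INTERVALS.get(use_case)
    if interval is None:
        raise KeyError(f"no cadence configured for {use_case!r}")
    if last_run is None:
        return True
    return now - last_run >= interval


now = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
print(is_refresh_due("price_monitoring", now - timedelta(minutes=5), now))      # True
print(is_refresh_due("defi_yield_tracking", now - timedelta(minutes=30), now))  # False
```

A one-off engagement simply runs the collector once; the continuous case reduces to calling a check like this from whatever scheduler the team already operates, such as cron or Airflow.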

What does data quality mean specifically for scraped cryptocurrency datasets?

Data quality in cryptocurrency data scraping requires token identity resolution and deduplication across ticker symbols, contract addresses, and chain identifiers; price and volume normalization across venues with different denomination conventions and double-counting practices; timestamp standardization to UTC with millisecond precision; and schema standardization across sources that use incompatible field naming conventions. Raw scraped crypto data without these quality layers produces analytical noise. A high-quality scraped crypto dataset should have critical field completeness above 95% for most use cases, timestamps consistent to the millisecond, and token deduplication accuracy above 97%.
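The quality layers described above can be sketched as a few standard-library Python helpers: UTC millisecond timestamp normalization, token deduplication keyed on chain and contract address instead of ticker symbol, and a critical-field completeness ratio. The record field names (`chain_id`, `contract_address`, `price_usd`, `ts`), the sample rows, and the placeholder addresses are all hypothetical. Lowercasing only `0x`-prefixed addresses assumes EVM-style hex addresses; a production pipeline would need chain-specific identity rules.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, Iterable, List, Sequence, Tuple

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def to_utc_millis(ts: str) -> int:
    """Convert an ISO-8601 timestamp string to integer epoch milliseconds (UTC).

    Assumption: strings without an offset are already in UTC.
    """
    dt = datetime.fromisoformat(ts.replace("Z", "+00:00"))
    if dt.tzinfo is None:
        dt = dt.replace(tzinfo=timezone.utc)
    # Integer timedelta division avoids float rounding at millisecond precision.
    return (dt - EPOCH) // timedelta(milliseconds=1)


def token_key(record: Dict[str, str]) -> Tuple[str, str]:
    """Identify a token by (chain, contract address), never by ticker alone."""
    chain = record["chain_id"].strip().lower()
    address = record["contract_address"].strip()
    # EVM hex addresses are case-insensitive (mixed case is only a checksum);
    # other formats, such as base58, are case-sensitive and are left untouched.
    if address.lower().startswith("0x"):
        address = address.lower()
    return chain, address


def dedupe_tokens(records: Iterable[Dict[str, str]]) -> List[Dict[str, str]]:
    """Keep the first record seen for each (chain, contract address) pair."""
    unique: Dict[Tuple[str, str], Dict[str, str]] = {}
    for record in records:
        unique.setdefault(token_key(record), record)
    return list(unique.values())


def critical_field_completeness(records: Sequence[Dict[str, str]], fields: Sequence[str]) -> float:
    """Share of records in which every critical field is present and non-empty."""
    if not records:
        return 0.0
    complete = sum(all(r.get(f) not in (None, "") for f in fields) for r in records)
    return complete / len(records)


# Hypothetical rows: the same token listed twice on one chain, once on another.
rows = [
    {"symbol": "TKN", "chain_id": "ethereum", "contract_address": "0xAbC0000000000000000000000000000000000001",
     "price_usd": "1.02", "ts": "2025-01-01T00:00:00.123Z"},
    {"symbol": "tkn", "chain_id": "Ethereum", "contract_address": "0xabc0000000000000000000000000000000000001",
     "price_usd": "1.01", "ts": "2025-01-01T01:00:00.123+01:00"},
    {"symbol": "TKN", "chain_id": "solana", "contract_address": "TknPlaceholderMint1111111111111111111111111",
     "price_usd": "", "ts": "2025-01-01T00:00:00.123Z"},
]

unique = dedupe_tokens(rows)
print(len(unique))                                # 2
print([to_utc_millis(r["ts"]) for r in rows])     # all three equal 1735689600123
print(critical_field_completeness(unique, ["chain_id", "contract_address", "price_usd", "ts"]))  # 0.5
```

Keying on contract address rather than ticker is the design choice that matters here: the two Ethereum rows differ in symbol case and address checksum casing but collapse to one token, while the same ticker on another chain stays distinct.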

What are the legal boundaries for cryptocurrency data scraping?

Cryptocurrency data scraping operates under the same general web scraping legal frameworks as any other domain: Terms of Service provisions on target platforms create civil litigation risk even for publicly accessible data; robots.txt directives represent ethical collection norms; and personal data collection, including pseudonymous wallet-level data, may trigger GDPR, CCPA, or equivalent data protection obligations depending on jurisdiction and collection purpose. Compliance data programs have additional obligations related to data governance, audit trail documentation, and retention policy alignment. Always conduct a legal review of target platforms, data types, and applicable jurisdictional law before initiating any cryptocurrency data scraping program.
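Of the checks listed above, robots.txt is the one that can be evaluated mechanically. The sketch below uses Python’s standard `urllib.robotparser` module to test candidate URLs and read the applicable `Crawl-delay` before anything is fetched. The robots.txt content, the `exchange.example.com` host, and the `ExampleResearchBot` user agent are hypothetical, and a passing check covers only robots.txt, not Terms of Service or data protection obligations.

```python
from typing import Dict, Iterable, Optional, Tuple
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for an illustrative exchange host.
SAMPLE_ROBOTS = """\
User-agent: *
Crawl-delay: 5
Disallow: /account/
Disallow: /api/private/
"""


def check_robots(robots_txt: str, user_agent: str,
                 urls: Iterable[str]) -> Tuple[Dict[str, bool], Optional[int]]:
    """Evaluate robots.txt rules for a crawler before any request is scheduled.

    Returns a map of URL -> allowed flag, plus the Crawl-delay in seconds that
    applies to this user agent (None if the file does not set one).
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse in-memory text, no network call
    allowed = {url: parser.can_fetch(user_agent, url) for url in urls}
    return allowed, parser.crawl_delay(user_agent)


allowed, delay = check_robots(
    SAMPLE_ROBOTS,
    "ExampleResearchBot/0.1",
    [
        "https://exchange.example.com/markets/btc-usdt",
        "https://exchange.example.com/account/settings",
    ],
)
print(allowed)
print(delay)
```

In a live pipeline the text would come from the target’s `/robots.txt`. Note that `RobotFileParser` applies the first matching rule in file order rather than the longest-match precedence that RFC 9309 specifies, so results can differ for files that mix `Allow` and `Disallow` on overlapping paths.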

In what formats can scraped cryptocurrency data be delivered to business teams?

Delivery formats are entirely a function of the consuming team’s workflow. Quant and data science teams typically receive Parquet files delivered to cloud storage or direct loads to columnar data warehouses. Risk monitoring teams receive structured JSON feeds with automated quality check reports and threshold-based alert records. Compliance teams receive structured CSV files with explicit data provenance documentation and audit chain records. Product and growth teams receive pre-computed delta files delivered to BI tools or Google Sheets. The delivery format is a design decision made before collection begins, not after, and must be aligned to the consuming team’s existing analytical workflow to ensure the data actually gets used.
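As a minimal illustration of format being a delivery-side decision, the sketch below turns two snapshots of the same hypothetical metric into a delta, then serializes that one delta both as JSON Lines (for a feed-style consumer) and as CSV with provenance columns (for an audit-oriented consumer). The tokens, values, `source_url`, and column names are placeholders. Parquet output is omitted because it needs a third-party library such as pyarrow.

```python
import csv
import io
import json
from typing import Dict, List

Snapshot = Dict[str, float]


def compute_delta(previous: Snapshot, current: Snapshot) -> List[Dict[str, object]]:
    """Return one row per key whose value was added, removed, or changed."""
    rows: List[Dict[str, object]] = []
    for key in sorted(previous.keys() | current.keys()):
        old, new = previous.get(key), current.get(key)
        if old != new:
            rows.append({"token": key, "previous": old, "current": new})
    return rows


def to_json_lines(rows: List[Dict[str, object]]) -> str:
    """One JSON object per line; missing values become null."""
    return "\n".join(json.dumps(row, sort_keys=True) for row in rows)


def to_csv_with_provenance(rows: List[Dict[str, object]], source_url: str,
                           collected_at_ms: int) -> str:
    """CSV with provenance columns appended to every row; None becomes an empty cell."""
    fieldnames = ["token", "previous", "current", "source_url", "collected_at_ms"]
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    for row in rows:
        writer.writerow({**row, "source_url": source_url, "collected_at_ms": collected_at_ms})
    return buffer.getvalue()


# Hypothetical snapshots: one value changed, one token newly listed.
previous = {"AAA": 100.0, "BBB": 50.0}
current = {"AAA": 100.0, "BBB": 55.0, "CCC": 20.0}
delta = compute_delta(previous, current)
print(to_json_lines(delta))
print(to_csv_with_provenance(delta, "https://aggregator.example.com/markets", 1735689600123))
```

Because both writers consume the same delta rows, the collection logic stays fixed while each team’s format is chosen at the delivery layer.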